1. Introduction

Robots are no longer confined to repetitive tasks within controlled environments. As they enter human-centered domains like homes, hospitals, warehouses, and urban spaces, their ability to perceive and understand complex and dynamic scenes becomes essential. Computer vision provides robots with the capability to perceive the world visually—emulating, and in some cases surpassing, human visual capabilities.

Just as human eyes feed the brain with visual information to make informed decisions and actions, robotic vision systems capture, process, and interpret visual data to enable intelligent behavior. This includes identifying objects, understanding spatial geometry, recognizing human gestures, and performing real-time interaction with physical environments.

2. The Fundamentals of Computer Vision in Robotics

Computer vision in robotics involves a multi-stage pipeline, starting from image acquisition to decision-making and control. Each stage plays a vital role in building a robot that can perceive its environment effectively.

2.1 Image Acquisition

At the heart of any vision system lies the imaging sensor. Common types include:

- Monocular RGB Cameras: Basic color images, used for classification and detection.

- Stereo Cameras: Provide depth perception by comparing two image streams.

- RGB-D Sensors (e.g., Microsoft Kinect, Intel RealSense): Capture both color and depth, allowing 3D scene understanding.

- Thermal Cameras: Useful in low-light or high-heat environments.

- Event Cameras: Capture changes in a scene at microsecond resolutions for fast motion detection.

2.2 Image Processing and Feature Extraction

This involves preparing raw images for high-level tasks:

- Filtering and Denoising: Removes visual artifacts.

- Edge Detection (e.g., Canny filter): Identifies boundaries and shapes.

- Keypoint Detection (e.g., SIFT, SURF, ORB): Locates distinct features for matching and tracking.

- Segmentation: Isolates different regions of the image (e.g., background vs. object).

2.3 Object Detection and Recognition

Robots must detect and recognize objects to interact meaningfully. Methods include:

- Traditional Methods: Template matching, histogram analysis.

- Deep Learning Models:

- YOLO (You Only Look Once)

- Faster R-CNN

- SSD (Single Shot MultiBox Detector)

These models localize objects within images using bounding boxes and assign class labels.

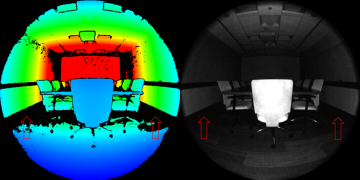

2.4 3D Perception and Scene Reconstruction

Understanding spatial geometry is essential for tasks like navigation and manipulation.

- Stereo Vision: Computes disparity between two images for depth.

- SLAM (Simultaneous Localization and Mapping): Builds maps while estimating the robot’s position.

- Structure from Motion (SfM): Creates 3D models from moving camera views.

- LiDAR Integration: Fuses vision with laser scanning for high-precision 3D mapping.

3. Applications of Computer Vision in Robotics

Computer vision enables robots to function intelligently across diverse domains. Below are key application areas:

3.1 Industrial Robotics

In smart factories, robots equipped with vision perform:

- Assembly Verification: Ensuring components are correctly aligned.

- Quality Inspection: Detecting surface defects or incorrect assembly.

- Vision-Guided Pick-and-Place: Adapting to parts that shift or rotate on conveyor belts.

Computer vision reduces the need for fixed programming and allows for greater flexibility in manufacturing.

3.2 Service and Domestic Robots

For robots in homes or commercial settings:

- Face and Gesture Recognition: Used in social robots for interaction.

- Object Retrieval: Locating and delivering items to users.

- Environmental Mapping: Allowing autonomous movement in cluttered spaces.

Robots like iRobot’s Roomba use vision for area mapping and obstacle avoidance.

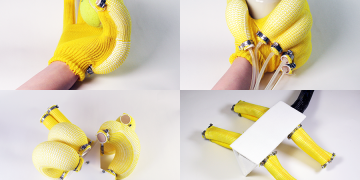

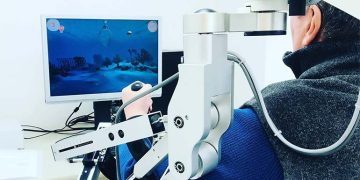

3.3 Medical and Assistive Robotics

Vision enables:

- Minimally Invasive Surgery: Visual servoing guides tools inside the human body.

- Rehabilitation Robotics: Detecting limb position and motion for therapy.

- Elderly Assistance: Recognizing human postures to alert falls or health issues.

3.4 Agricultural Robotics

Vision systems are used in:

- Fruit Detection: Locating ripe produce.

- Weed Removal: Classifying crops versus weeds.

- Yield Estimation: Counting fruits and measuring crop health from aerial images.

3.5 Autonomous Vehicles and Drones

Perhaps the most complex visual systems are used in:

- Autonomous Driving: Lane detection, traffic sign recognition, pedestrian detection.

- UAV Navigation: Obstacle avoidance, visual-inertial odometry, landing site detection.

4. Enabling Technologies Behind Robotic Vision

4.1 Deep Learning

The adoption of Convolutional Neural Networks (CNNs) and Transformers has revolutionized computer vision. Key advantages:

- Learn features automatically from data.

- Generalize well across environments.

- Achieve near-human accuracy in object detection and segmentation.

4.2 Sensor Fusion

Robotic systems often combine vision with:

- Lidar: For long-range 3D mapping.

- IMUs (Inertial Measurement Units): For motion estimation.

- Force/Torque Sensors: For grasp adjustment during object manipulation.

Sensor fusion improves robustness and accuracy, especially in environments where vision alone may be insufficient.

4.3 Edge Computing and Real-Time Processing

With advances in edge AI chips (e.g., NVIDIA Jetson, Intel Movidius), visual inference can now occur on-board robots, reducing latency and enhancing autonomy.

5. Challenges in Robotic Vision

Despite the progress, several challenges remain:

5.1 Environmental Variability

- Lighting changes, motion blur, reflections, and shadows can affect performance.

- Domain adaptation is needed to generalize models across conditions.

5.2 Real-Time Constraints

Robots often require visual processing to occur at 30–60 FPS. Balancing speed and accuracy is an engineering challenge.

5.3 Occlusion and Partial Visibility

Detecting and recognizing objects that are partially blocked or stacked remains complex, especially in cluttered scenes.

5.4 Calibration and Drift

Sensor alignment and calibration errors can accumulate over time, impacting 3D reconstruction and pose estimation.

5.5 Data Requirements

Training deep models requires large, diverse, and annotated datasets, which may not exist for all environments or object classes.

6. Innovations and Future Directions

6.1 Self-Supervised and Few-Shot Learning

Allowing robots to learn from unlabeled data or from very few examples is becoming increasingly viable, reducing reliance on data annotation.

6.2 Active Perception

Robots may move their cameras or sensors to gather the most informative views—enhancing perception through action.

6.3 Vision-Language Models in Robotics

Integrating vision with natural language understanding (e.g., using models like CLIP or Flamingo) allows for instruction-based behavior:

“Pick up the red cup on the left.”

This leads to more intuitive human-robot interaction.

6.4 Embodied AI

Vision is just one modality in embodied intelligence, where perception, cognition, and control are integrated. Computer vision will remain central to these systems.

7. Case Studies

7.1 Amazon Robotics – Item Picking

Amazon’s warehouse robots rely on vision to identify, pick, and place thousands of items per day. Deep learning models are used for object recognition, while stereo vision estimates depth for grasp planning.

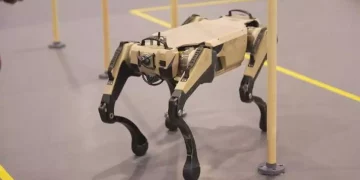

7.2 Boston Dynamics – Mobile Robots

Boston Dynamics’ robots use multiple cameras and 3D perception to walk, navigate stairs, open doors, and manipulate objects—all in dynamic environments.

7.3 Autonomous Vehicles – Tesla and Waymo

Advanced vision stacks with multi-camera arrays and deep neural nets enable real-time scene understanding and path planning in autonomous cars.

8. Conclusion

Computer vision is undoubtedly one of the foundational pillars of modern robotics. It empowers robots not only to “see,” but to interpret, interact with, and adapt to the physical world. Whether in a factory, a home, a field, or a hospital, vision-enabled robots can perceive and respond in ways that were once unimaginable.

Despite the challenges of environmental variability, data dependence, and real-time processing, advancements in deep learning, sensor fusion, and computational power are rapidly bridging the gap between robotic perception and human-like understanding.

As research continues and technologies mature, the future holds immense promise: robots with vision will not only see clearly but also think critically, act precisely, and collaborate meaningfully with humans in an increasingly automated world.