Introduction

Accurate, real-time positioning is one of the most critical requirements for reliable autonomous systems. Whether it’s a self-driving car navigating urban streets, a drone flying autonomously, or a warehouse robot performing logistics tasks, the robot must be able to determine its location and track its own motion. This is where positioning sensors, specifically GPS (Global Positioning System) receivers and IMUs (Inertial Measurement Units), come into play. Together they provide the position, velocity, and orientation data a robot needs to navigate complex environments and perform tasks effectively.

This article delves into the working principles, integration strategies, and applications of GPS and IMUs in robotics. We will explore how these sensors complement each other, their limitations, and how advancements in sensor fusion and algorithm development are driving the next generation of autonomous systems.

GPS: The Backbone of Outdoor Localization

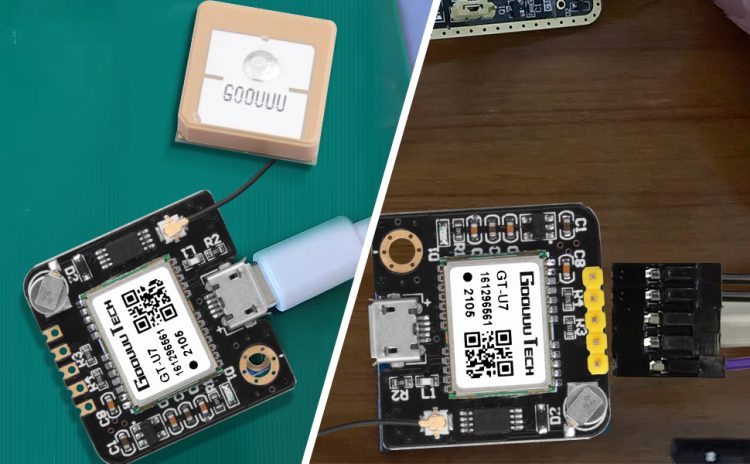

What is GPS?

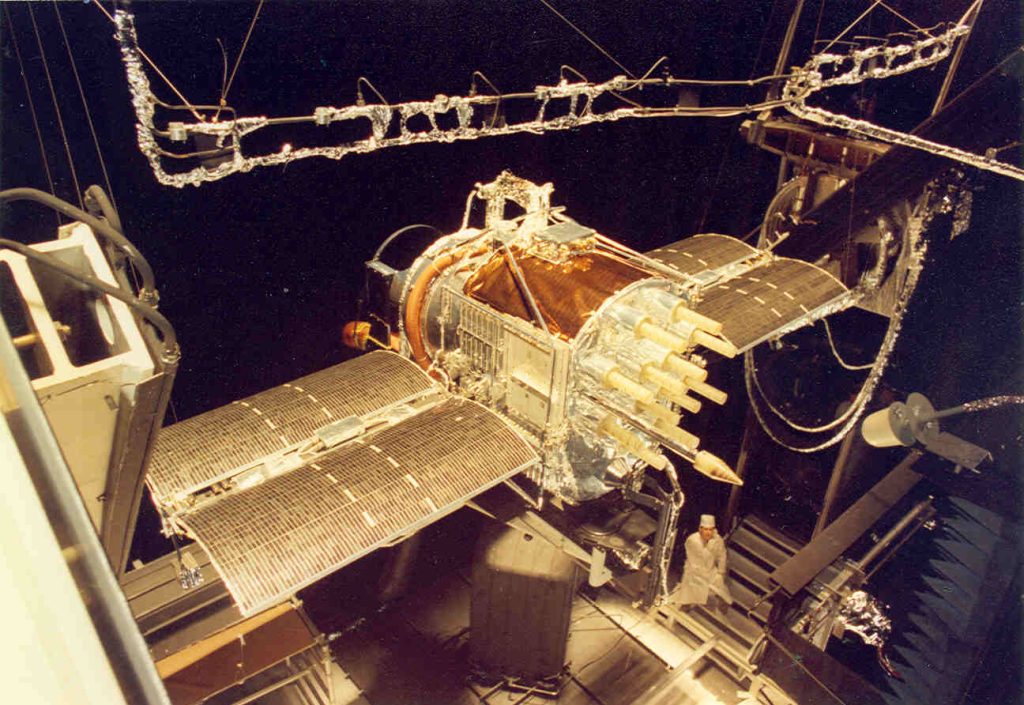

Global Positioning System (GPS) is a satellite-based navigation system that provides geolocation and time information to a receiver anywhere on Earth with an unobstructed line of sight to four or more satellites. It works by trilateration: the receiver measures its distance to several satellites orbiting the Earth and solves for the one position consistent with all of those distances. GPS has revolutionized many industries by providing reliable outdoor navigation, and its application in robotics is no exception.

How GPS Works in Robotics

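GPS relies on a constellation designed around a minimum of 24 operational satellites, each broadcasting timestamped signals that a receiver on the robot picks up. By measuring how long each signal takes to arrive, the receiver converts travel time into distance. With signals from at least four satellites, it can solve for its three position coordinates plus its own clock error. A standard civilian receiver is typically accurate to within a few meters under open sky.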
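The time-to-distance idea can be sketched in a simplified form. The example below is a minimal illustration, not how a real receiver is implemented: it works in 2D, assumes a perfect receiver clock (so three transmitters suffice), and uses a hypothetical function name, `trilaterate_2d`. A real GPS receiver works in 3D and also solves for its clock error.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def trilaterate_2d(anchors, travel_times):
    """Estimate (x, y) from three known transmitter positions and signal travel times.

    Simplifying assumptions: flat 2D geometry and a perfect receiver clock.
    """
    # Convert each travel time into a distance (range = speed * time).
    r1, r2, r3 = (C * t for t in travel_times)
    (x1, y1), (x2, y2), (x3, y3) = anchors

    # Subtracting the first circle equation from the other two removes the
    # quadratic terms and leaves two linear equations in x and y.
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2

    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-9:
        raise ValueError("transmitters are collinear; position is ambiguous")

    # Solve the 2x2 linear system with Cramer's rule.
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - a21 * b1) / det
    return x, y


if __name__ == "__main__":
    anchors = [(0.0, 0.0), (1000.0, 0.0), (0.0, 1000.0)]
    true_position = (300.0, 400.0)
    times = [math.hypot(true_position[0] - ax, true_position[1] - ay) / C
             for ax, ay in anchors]
    print(trilaterate_2d(anchors, times))
```

The travel times in the usage example are generated from a known position, so the function should recover that position up to floating-point error.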

In autonomous robots, GPS is used primarily for outdoor navigation. For instance, in agricultural robots, drones, and autonomous vehicles, GPS provides real-time location data that lets the robot traverse large open spaces and follow designated paths. GPS only reports the robot’s own position, so obstacle avoidance still depends on other sensors.
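To follow a path, a robot usually needs its GPS fix in meters relative to some local origin rather than in degrees. The sketch below shows one common approximation, assuming a spherical Earth and a small working area (a field or a few city blocks). The function name `latlon_to_local` and the choice of mean Earth radius are illustrative assumptions, not part of any particular library.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; a spherical approximation


def latlon_to_local(lat_deg, lon_deg, ref_lat_deg, ref_lon_deg):
    """Return (east, north) in meters relative to a reference point.

    Uses a flat-Earth (equirectangular) approximation, which is only
    reasonable over short distances from the reference point.
    """
    d_lat = math.radians(lat_deg - ref_lat_deg)
    d_lon = math.radians(lon_deg - ref_lon_deg)
    # Lines of longitude converge toward the poles, so east-west distance
    # is scaled by the cosine of the reference latitude.
    east = EARTH_RADIUS_M * d_lon * math.cos(math.radians(ref_lat_deg))
    north = EARTH_RADIUS_M * d_lat
    return east, north


if __name__ == "__main__":
    # A point 0.001 degrees north of the reference is roughly 111 m away.
    print(latlon_to_local(0.001, 0.0, 0.0, 0.0))
```

Over longer distances this approximation degrades, and a proper map projection or geodetic library would be the safer choice.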

GPS Accuracy and Challenges

While GPS has the advantage of providing location data over vast distances, its accuracy can be influenced by several factors:

- Signal Obstruction: Tall buildings, dense forests, or indoor environments can block GPS signals, reducing accuracy.

- Multipath Effects: Signals that reflect off surfaces such as buildings or the ground travel a longer path than the direct signal, which makes the measured distance too long and shifts the computed position.

- Atmospheric Interference: The ionosphere and troposphere delay GPS signals. The delay varies with solar activity and, to a lesser extent, weather, leading to range errors.

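Despite these challenges, techniques such as Differential GPS (DGPS) and Real-Time Kinematic (RTK) GPS improve accuracy by using corrections from a reference station at a precisely known location. DGPS typically brings errors down to around a meter, while RTK can reach the centimeter-level precision that many robotics applications require.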
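The idea behind differential correction can be sketched as follows. This is a deliberately simplified, position-domain illustration: it assumes the base station and the robot see the same error at the same moment, and the function name `dgps_correct` is hypothetical. Real DGPS and RTK systems correct the individual satellite range measurements rather than the final position.

```python
def dgps_correct(rover_measured, base_measured, base_surveyed):
    """Subtract the error observed at a base station from the rover's fix.

    All arguments are (east, north) tuples in meters in a shared local frame.
    """
    # The base station knows where it really is, so the difference between
    # its GPS fix and its surveyed position is the current GPS error.
    error_east = base_measured[0] - base_surveyed[0]
    error_north = base_measured[1] - base_surveyed[1]
    # Assume the rover, being nearby, suffers the same error and remove it.
    return rover_measured[0] - error_east, rover_measured[1] - error_north


if __name__ == "__main__":
    base_surveyed = (0.0, 0.0)
    base_measured = (1.5, -2.0)      # GPS says the base is 1.5 m east, 2 m south
    rover_measured = (101.5, 48.0)   # rover fix carrying the same error
    print(dgps_correct(rover_measured, base_measured, base_surveyed))
```

The shared-error assumption weakens as the robot moves farther from the base station, which is one reason correction services limit the usable baseline distance.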

IMU: Enhancing Motion Sensing

What is an IMU?

An Inertial Measurement Unit (IMU) is a sensor package that measures a robot’s own motion: linear acceleration, angular velocity, and sometimes the surrounding magnetic field. IMUs typically consist of accelerometers and gyroscopes, and sometimes magnetometers, each providing essential data about the robot’s movement.

- Accelerometer: Measures linear acceleration along one or more axes. The reading includes gravity, which must be removed before the data is used to track motion.

- Gyroscope: Measures the rate of rotation around one or more axes.

- Magnetometer (optional): Measures the surrounding magnetic field, often used as a compass to estimate heading relative to magnetic north.

How IMUs Work in Robotics

IMUs provide real-time data that can help a robot understand its motion state. Integrating the acceleration data once gives velocity, and integrating again gives the change in position. Integrating the gyroscope’s angular velocity gives the change in orientation. This data is essential for maintaining the robot’s stability, especially in dynamic environments or when GPS signals are unavailable.
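The integration steps can be sketched for a robot moving on a flat plane. This is a minimal illustration under stated assumptions: each sample is a pair of forward acceleration (m/s², gravity already removed) and yaw rate (rad/s), the sample period `dt` is fixed, and the function name `dead_reckon` is hypothetical.

```python
import math


def dead_reckon(samples, dt, x=0.0, y=0.0, heading=0.0, speed=0.0):
    """Integrate (forward_accel, yaw_rate) samples into a planar pose.

    Returns (x, y, heading, speed) after all samples have been applied.
    """
    for forward_accel, yaw_rate in samples:
        heading += yaw_rate * dt               # gyroscope: angular rate -> heading
        speed += forward_accel * dt            # accelerometer: acceleration -> speed
        x += speed * math.cos(heading) * dt    # speed and heading -> position
        y += speed * math.sin(heading) * dt
    return x, y, heading, speed


if __name__ == "__main__":
    # One second of constant 1 m/s^2 forward acceleration, no turning.
    samples = [(1.0, 0.0)] * 100
    print(dead_reckon(samples, dt=0.01))
```

This simple Euler integration is enough to show the principle. Production systems typically work in 3D, track full orientation, and use more careful integration schemes.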

In indoor applications, where GPS signals are often too weak or non-existent, IMUs play a critical role in localizing and stabilizing the robot. For instance, in warehouse robots, IMUs help track the robot’s motion and orientation through aisles, ensuring precise movements even in GPS-denied environments.

IMU Accuracy and Challenges

IMUs provide high-frequency data and can be very accurate in the short term. However, errors accumulate through the integration process, leading to drift. This phenomenon, commonly called integration drift, occurs because small errors in the acceleration or angular velocity data are integrated along with the true signal, causing the robot’s estimated position and orientation to diverge from the truth. A constant accelerometer bias, for example, produces a position error that grows with the square of the elapsed time.
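A short numerical experiment makes the growth rate concrete. The sketch below assumes a stationary robot whose accelerometer reports a small constant bias and nothing else. The function name `position_drift` and the bias value are illustrative assumptions.

```python
def position_drift(accel_bias, duration_s, dt=0.01):
    """Position error (m) from double-integrating a constant accelerometer bias."""
    velocity_error = 0.0
    position_error = 0.0
    for _ in range(round(duration_s / dt)):
        velocity_error += accel_bias * dt      # first integration: bias -> velocity error
        position_error += velocity_error * dt  # second integration: velocity error -> position error
    return position_error


if __name__ == "__main__":
    # A bias of 0.01 m/s^2 is small, yet the error grows roughly as 0.5 * bias * t^2.
    for seconds in (10, 60):
        print(seconds, "s:", round(position_drift(0.01, seconds), 2), "m")
```

With this bias, the error is roughly half a meter after 10 seconds and roughly 18 meters after a minute, which is why an IMU alone cannot provide long-term localization.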

To counteract this, sensor fusion algorithms combine IMU data with other sensor data, including GPS, to correct for drift and provide more accurate long-term localization.

Sensor Fusion: Combining GPS and IMU Data

While both GPS and IMUs have individual strengths, they also have inherent weaknesses. GPS errors are bounded and do not grow over time, but the signal updates slowly and can be unreliable in certain environments, such as urban canyons or indoor spaces. IMUs, on the other hand, capture motion at a high rate and are accurate over short intervals, but their position and orientation estimates degrade over time due to drift.

To address these challenges, sensor fusion techniques combine data from both GPS and IMUs to produce a more accurate and robust estimate of the robot’s position and motion state. One of the most widely used sensor fusion techniques is the Kalman filter.

Kalman Filter for Sensor Fusion

A Kalman filter is a recursive algorithm that combines a motion model with noisy sensor measurements to estimate a system’s state. The estimate is optimal when the system is linear and the noise is Gaussian. In GPS/IMU fusion, IMU data typically drives the prediction step and each GPS fix corrects the prediction, which limits IMU drift and bridges short GPS signal losses. This leads to more stable and accurate localization in dynamic environments.
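The predict-and-correct cycle can be sketched for motion along a single axis. This is a minimal illustration under stated assumptions, not a production filter: the state is position and velocity, IMU acceleration is treated as a control input with variance `accel_var`, GPS measures position directly with variance `gps_var`, and the class name `GpsImuKalman1D` is hypothetical. The 2x2 matrix algebra is written out by hand to keep the example dependency-free.

```python
class GpsImuKalman1D:
    """Kalman filter over [position, velocity] along one axis."""

    def __init__(self, accel_var, gps_var):
        self.p = 0.0                       # estimated position (m)
        self.v = 0.0                       # estimated velocity (m/s)
        self.P = [[1.0, 0.0], [0.0, 1.0]]  # state covariance
        self.accel_var = accel_var         # IMU acceleration noise variance
        self.gps_var = gps_var             # GPS position noise variance

    def predict(self, accel, dt):
        """Propagate the state with an IMU acceleration sample."""
        self.p += self.v * dt + 0.5 * accel * dt * dt
        self.v += accel * dt
        (p00, p01), (p10, p11) = self.P
        q = self.accel_var
        # P = F P F^T + Q with F = [[1, dt], [0, 1]]; Q comes from accel noise.
        self.P = [
            [p00 + dt * (p01 + p10) + dt * dt * p11 + q * dt**4 / 4,
             p01 + dt * p11 + q * dt**3 / 2],
            [p10 + dt * p11 + q * dt**3 / 2,
             p11 + q * dt * dt],
        ]

    def update(self, gps_position):
        """Correct the state with a GPS position fix (H = [1, 0])."""
        (p00, p01), (p10, p11) = self.P
        s = p00 + self.gps_var             # innovation variance
        k0, k1 = p00 / s, p10 / s          # Kalman gain
        residual = gps_position - self.p
        self.p += k0 * residual
        self.v += k1 * residual
        # P = (I - K H) P
        self.P = [
            [(1 - k0) * p00, (1 - k0) * p01],
            [p10 - k1 * p00, p11 - k1 * p01],
        ]


if __name__ == "__main__":
    kf = GpsImuKalman1D(accel_var=0.4, gps_var=1.0)
    kf.predict(accel=2.0, dt=1.0)   # IMU says we accelerated
    kf.update(gps_position=1.2)     # GPS fix pulls the estimate toward 1.2 m
    print(kf.p, kf.v)
```

In a typical loop, `predict` runs at the IMU rate and `update` runs whenever a GPS fix arrives. During a GPS outage only `predict` runs, so the covariance grows until the next fix.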

Extended Kalman Filter (EKF)

For nonlinear systems like robots, the Extended Kalman Filter (EKF) is commonly used. The EKF adapts the traditional Kalman filter to nonlinear relationships between the sensors and the robot’s state by linearizing the motion and measurement models around the current estimate at each step. It is widely used in real-time robotics applications.
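The linearization step is easiest to see in a scalar example. The sketch below is an illustrative toy, not a GPS/IMU implementation: a robot moves along a line and measures its range to a beacon at a known position, which is a nonlinear function of the robot’s position (much as a satellite range is a nonlinear function of receiver position). The function names and the beacon setup are assumptions made for the example, and the beacon is assumed to sit off the line so the range is never zero.

```python
import math


def ekf_predict(x, P, velocity, dt, process_var):
    """Prediction step; the motion model here is linear: x_next = x + velocity * dt."""
    return x + velocity * dt, P + process_var


def ekf_update(x, P, measured_range, beacon, range_var):
    """Update step for a range measurement to a beacon at (bx, by)."""
    bx, by = beacon
    predicted_range = math.hypot(x - bx, by)  # nonlinear measurement model h(x)
    H = (x - bx) / predicted_range            # Jacobian dh/dx at the current estimate
    S = H * P * H + range_var                 # innovation variance
    K = P * H / S                             # Kalman gain
    x_new = x + K * (measured_range - predicted_range)
    P_new = (1 - K * H) * P
    return x_new, P_new


if __name__ == "__main__":
    beacon = (0.0, 3.0)
    true_x = 6.0
    measured = math.hypot(true_x - beacon[0], beacon[1])
    x, P = 4.0, 4.0  # poor initial guess with large uncertainty
    x, P = ekf_update(x, P, measured, beacon, range_var=0.01)
    print(x, P)      # the estimate moves toward the true position
```

The only difference from a linear Kalman update is that `H` is recomputed from the current estimate each time. If the estimate is far from the truth, the linearization is poor, which is a known weakness of the EKF.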

Sensor fusion not only enhances localization accuracy but also increases the robot’s robustness by allowing it to keep operating through short GPS outages, such as when passing indoors or through areas with heavy interference. Without any absolute position reference, however, the fused estimate will eventually drift.

Applications of GPS and IMU in Robotics

Autonomous Vehicles

In autonomous vehicles, combining GPS and IMU data lets the car maintain a continuous position estimate even where GPS signals are intermittent. The GPS provides an absolute position fix, accurate to a few meters with a standard receiver or to centimeter level with RTK corrections, while the IMU fills the gaps between fixes through dead reckoning (estimating position based on previous movement). This allows the vehicle to keep navigating for short periods when GPS signals are blocked by tunnels or tall buildings.

Drone Navigation

Drones often operate in complex environments where GPS signals can be unreliable due to obstacles like buildings or trees. In these cases, IMUs play a critical role in stabilizing the drone and maintaining its position relative to a target location. Fusing GPS and IMU data helps drones hold a stable attitude, bridge short GPS dropouts, and achieve accurate positioning and smooth motion control.

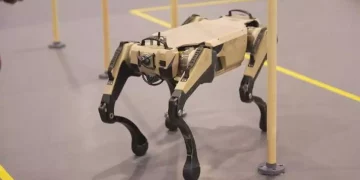

Robotics in Warehouses and Industrial Automation

In industrial environments, robots are tasked with performing highly precise movements. For example, automated guided vehicles (AGVs) in warehouses navigate through aisles, pick and drop goods, and avoid collisions. GPS can help localize a robot in outdoor yards and loading areas, but signals inside a warehouse are usually too weak to use. Indoors, the IMU supports motion tracking and smooth, precise motion control alongside other localization sensors.

Agricultural Robotics

Agricultural robots, such as autonomous tractors and harvesters, rely on GPS to navigate large fields with high precision. IMUs help in detecting and correcting the orientation of the robot to ensure that operations like planting, harvesting, and spraying are carried out accurately. In this context, the combination of GPS and IMUs enables robots to cover vast areas without human intervention while maintaining the precision needed for agricultural tasks.

Future Directions and Advancements

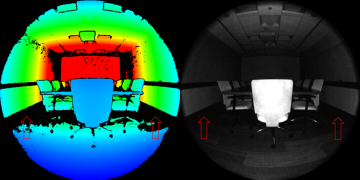

GPS and IMU Integration with Vision Systems

The future of autonomous robots lies in their ability to integrate multiple sensor modalities. Combining GPS, IMU, and computer vision systems can provide robots with a richer understanding of their environment. Perception sensors, such as cameras and LiDAR, can be used for obstacle detection, object recognition, and mapping. By fusing data from all these sensors, robots can achieve more reliable and accurate localization in both outdoor and indoor environments.

Advances in GNSS Technology

Satellite positioning itself is also improving. GPS is one of several global navigation satellite systems (GNSS). Receivers that combine GPS with other constellations, such as Galileo (European Union) and BeiDou (China), and with regional systems such as QZSS (Japan), can track more satellites at once, which is expected to provide more robust, precise, and reliable positioning data, even in challenging environments. These advancements, when coupled with IMUs and other sensors, will improve the robustness of autonomous robots.

Machine Learning for Sensor Fusion

As machine learning algorithms become more advanced, they are increasingly being applied to sensor fusion. Machine learning models can learn from real-world data to predict and correct sensor drift, improve GPS signal processing, and optimize localization algorithms for specific applications. This could significantly enhance the performance of robotic systems and allow them to adapt to dynamic environments in real time.

Conclusion

GPS and IMUs are foundational to modern robotic navigation, providing essential data for location determination and motion sensing. While each sensor has its strengths and weaknesses, their integration through sensor fusion techniques, such as Kalman filtering, results in highly reliable and accurate positioning systems for autonomous robots. As technology advances, the combination of GPS, IMUs, and other sensors will enable robots to navigate more efficiently and safely, even in complex, GPS-denied environments.

The future of robotics lies in further refinement of sensor fusion techniques, the development of more advanced positioning systems, and the integration of machine learning and computer vision. These innovations will drive the continued evolution of autonomous systems, bringing them closer to achieving human-like capabilities in diverse real-world applications.