Introduction

In recent years, computer vision has made remarkable strides, becoming an essential tool in a wide range of industries, from autonomous vehicles and robotics to medical imaging and surveillance. At its core, computer vision seeks to enable machines to understand and interpret visual information from the world around them. However, challenges persist in providing accurate and robust scene understanding, particularly in complex and dynamic environments.

One powerful solution to these challenges is sensor fusion: integrating data from multiple sensors, such as cameras, LiDAR, radar, and infrared devices, to improve the quality and reliability of the information a system perceives. Because each sensor observes the scene differently, combining them offers a more precise and robust perception of the environment than any single sensor can. This approach is increasingly being used in autonomous systems and robotics, where accurate, real-time decision-making is critical.

In this article, we will explore how sensor fusion enhances computer vision, the technologies behind it, its applications in various industries, and the future potential of this powerful technique.

The Basics of Computer Vision

What is Computer Vision?

Computer vision is a field of artificial intelligence (AI) that enables machines to interpret and understand the visual world. Using image processing and machine learning techniques, computer vision systems analyze visual input, such as images or videos, to extract meaningful information. This can include detecting objects, recognizing faces, understanding scenes, tracking motion, and even interpreting gestures or actions.

At its most fundamental level, computer vision involves the following key steps:

- Image Acquisition: Capturing raw data from a visual sensor, such as a camera.

- Preprocessing: Enhancing the image quality by removing noise, adjusting brightness/contrast, or normalizing the data.

- Feature Extraction: Identifying key features in the image, such as edges, corners, or textures.

- Object Detection and Recognition: Identifying objects or patterns within the image, often using techniques like convolutional neural networks (CNNs).

- Scene Understanding: Interpreting the context of the image, including spatial relationships between objects and environmental factors.
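
To make the first steps in the list above concrete, here is a minimal sketch in plain Python. It is an illustration only: the tiny synthetic `frame` stands in for image acquisition, and the helper names `normalize` and `horizontal_edges` are hypothetical, not part of any library. Real systems would typically use an image-processing library and far richer features.

```python
def normalize(image):
    """Preprocessing: rescale pixel intensities to the range [0, 1]."""
    flat = [p for row in image for p in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1  # avoid division by zero on a uniform image
    return [[(p - lo) / span for p in row] for row in image]


def horizontal_edges(image):
    """Feature extraction: absolute difference between horizontal neighbours."""
    return [[abs(row[x + 1] - row[x]) for x in range(len(row) - 1)]
            for row in image]


# Image acquisition stand-in: a synthetic grayscale frame whose left half
# is dark and whose right half is bright.
frame = [
    [10, 10, 200, 200],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]

edges = horizontal_edges(normalize(frame))

# The strongest response in the first row marks the dark-to-bright boundary.
edge_column = max(range(len(edges[0])), key=lambda x: edges[0][x])
print(edges[0], edge_column)
```

The strongest edge response sits between the second and third pixel columns, which is where the brightness changes. Detection and scene understanding build on features like this one.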

Key Challenges in Computer Vision

While computer vision has made significant progress, there are several challenges that researchers and developers face:

- Occlusion: Objects in the scene may be partially or fully blocked by other objects, making them difficult to detect.

- Lighting Conditions: Variations in lighting, shadows, or reflections can affect the quality of visual input.

- Depth Perception: Understanding the three-dimensional structure of a scene from a two-dimensional image remains a difficult task.

- Scale and Resolution: Objects may appear at different scales or resolutions, complicating their recognition and tracking.

- Dynamic Environments: In real-world applications, scenes are constantly changing, requiring real-time adaptation to new conditions.

These challenges can be addressed to some extent with sensor fusion.

What is Sensor Fusion?

Sensor fusion refers to the process of combining data from multiple sensors to improve the accuracy and reliability of the information obtained from the environment. In the context of computer vision, sensor fusion involves integrating data from different types of sensors, such as RGB cameras, depth sensors (e.g., LiDAR or ToF cameras), radar, and infrared sensors, to create a more complete and accurate understanding of the scene.

Types of Sensors Used in Fusion

- RGB Cameras: Standard color cameras that capture visual information in the form of images or video. These are widely used in computer vision tasks such as object recognition, tracking, and scene understanding.

- Depth Sensors: These sensors, such as LiDAR (Light Detection and Ranging) and ToF (Time-of-Flight) cameras, capture information about the distance between the sensor and objects in the environment. This is crucial for creating 3D models of scenes and understanding the spatial arrangement of objects.

- Infrared Sensors: These sensors capture infrared radiation rather than visible light. Thermal cameras in particular detect the heat emitted by objects, which can be invaluable for detecting people, animals, or vehicles in low-light or night-time conditions.

- Radar: Radar sensors emit radio waves and measure the reflections to detect objects, their distance, and their relative velocity. They are particularly useful in situations where vision-based sensors may struggle, such as in fog, heavy rain, or other low-visibility conditions.

- IMUs (Inertial Measurement Units): These sensors measure the linear acceleration and angular velocity of the device they are mounted on, from which its orientation and motion can be estimated. IMUs are often used in combination with other sensors to enhance motion tracking and navigation.
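
As a small worked example for the depth sensors in the list above: pulsed time-of-flight ranging converts the round-trip travel time of a light pulse into a distance using d = c * t / 2. The sketch below assumes an idealized pulse with no timing noise, and the function name `tof_distance_m` is hypothetical.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum


def tof_distance_m(round_trip_s):
    """Distance to a target given the round-trip time of a light pulse.

    The pulse travels to the target and back, so the one-way distance
    is half of the total path length.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


# A pulse that returns after 100 nanoseconds corresponds to roughly 15 m.
print(round(tof_distance_m(100e-9), 3))
```

The numbers also show why timing precision matters: one nanosecond of timing error corresponds to about 15 cm of range error.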

How Sensor Fusion Enhances Scene Understanding

By integrating information from multiple sensors, sensor fusion provides a more comprehensive view of the environment, improving the ability of computer vision systems to understand and interpret scenes. Here are several ways in which sensor fusion enhances scene understanding:

1. Improved Accuracy and Robustness

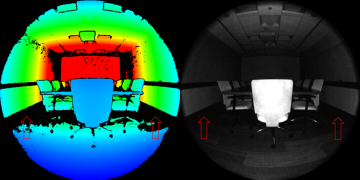

Different sensors have different strengths and weaknesses. For example, RGB cameras provide high-resolution color information but struggle in low light or with occlusions. LiDAR, on the other hand, excels at measuring distances and depth but lacks color or texture information. By combining data from multiple sensors, sensor fusion can compensate for the weaknesses of individual sensors, resulting in a more accurate and robust perception of the environment.

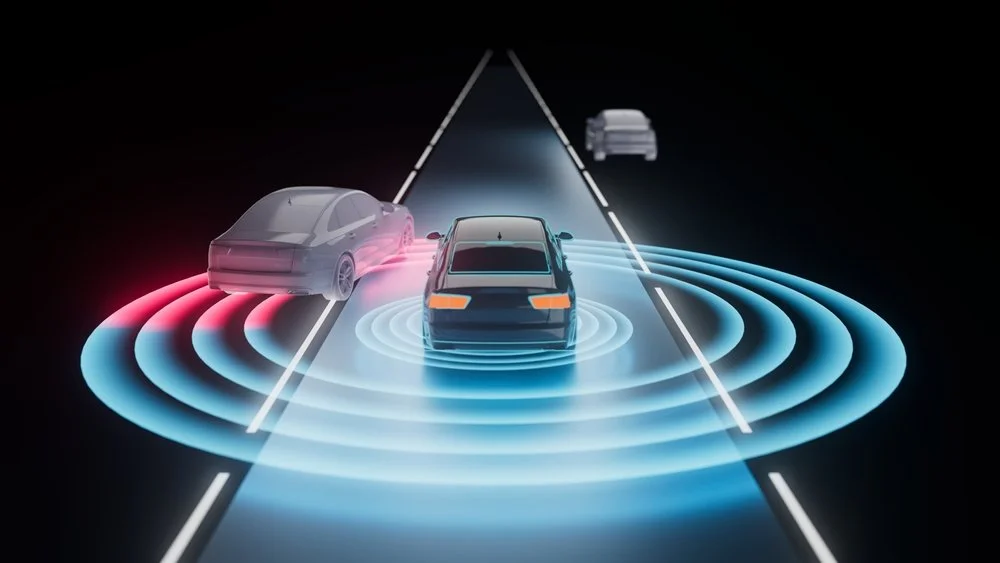

For instance, in autonomous vehicles, sensor fusion enables the integration of data from cameras, LiDAR, radar, and ultrasonic sensors to provide a more reliable understanding of the vehicle’s surroundings. Cameras detect traffic signs and road markings, LiDAR measures distances and creates 3D maps, and radar helps detect moving objects in various weather conditions.
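
One simple way to see how fusion compensates for a weak sensor is inverse-variance weighting, sketched below. It assumes each sensor reports an estimate of the same quantity together with a variance describing its noise, and that the errors are independent. The function name and the example numbers are hypothetical.

```python
def fuse_inverse_variance(estimates):
    """Fuse (value, variance) pairs into one (value, variance) estimate.

    Each measurement is weighted by 1 / variance, so noisier sensors
    contribute less to the fused value.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Hypothetical range to an obstacle, in metres:
camera_estimate = (10.0, 4.0)  # camera-based depth, noisier
lidar_estimate = (12.0, 1.0)   # LiDAR range, more precise

fused_value, fused_variance = fuse_inverse_variance(
    [camera_estimate, lidar_estimate]
)
print(fused_value, fused_variance)
```

The fused value lands closer to the more precise LiDAR reading, and the fused variance is lower than that of either input on its own.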

2. Better Depth Perception and 3D Reconstruction

One of the major challenges in computer vision is understanding the depth of objects in a scene. Stereo cameras can estimate depth from the disparity between two images, but their accuracy degrades with distance and in low-texture or poorly lit regions, where matching pixels between the two views becomes unreliable.
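
The standard relation for a rectified stereo pair is Z = f * B / d, where f is the focal length in pixels, B is the baseline between the cameras, and d is the disparity in pixels. The sketch below uses hypothetical camera parameters to show why distant objects are harder to range.

```python
def stereo_depth_m(focal_px, baseline_m, disparity_px):
    """Depth of a point from its disparity in a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


FOCAL_PX = 700.0    # assumed focal length in pixels
BASELINE_M = 0.12   # assumed distance between the two cameras

for disparity in (42.0, 2.0, 1.0):
    depth = stereo_depth_m(FOCAL_PX, BASELINE_M, disparity)
    print(f"disparity {disparity:5.1f} px -> depth {depth:6.2f} m")
```

At close range a one-pixel matching error barely changes the result. At small disparities the same error changes the estimated depth by tens of metres.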

By combining camera images with data from LiDAR or depth cameras, which directly measure the distance to objects, computer vision systems can generate more accurate 3D reconstructions of the scene. This is particularly valuable in applications such as robot navigation, augmented reality (AR), and 3D modeling, where an accurate understanding of the environment’s spatial structure is essential.
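
A common building block for this kind of fusion is projecting 3D points into the camera image so that each pixel can be paired with a measured depth. The sketch below uses the pinhole camera model and assumes the points have already been transformed into the camera coordinate frame (x right, y down, z forward). The intrinsic values and the function name are hypothetical.

```python
def project_to_image(points_cam, fx, fy, cx, cy, width, height):
    """Project 3D points (camera frame, metres) to pixel coordinates.

    Returns (u, v, depth) for every point that lies in front of the
    camera and falls inside the image bounds.
    """
    pixels = []
    for x, y, z in points_cam:
        if z <= 0:  # point is behind the camera
            continue
        u = fx * x / z + cx
        v = fy * y / z + cy
        if 0 <= u < width and 0 <= v < height:
            pixels.append((u, v, z))
    return pixels


# Hypothetical LiDAR returns, already expressed in the camera frame.
lidar_points = [
    (0.0, 0.0, 5.0),    # straight ahead
    (1.0, 0.0, 5.0),    # one metre to the right
    (0.0, 0.0, -1.0),   # behind the camera, discarded
    (100.0, 0.0, 5.0),  # far outside the field of view, discarded
]

projected = project_to_image(lidar_points, fx=500.0, fy=500.0,
                             cx=320.0, cy=240.0, width=640, height=480)
print(projected)
```

In practice the transform from the LiDAR frame to the camera frame comes from an extrinsic calibration, which this sketch leaves out.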

3. Enhanced Object Detection and Recognition

Sensor fusion can also improve object detection and recognition by combining the complementary strengths of various sensors. For example, radar and infrared sensors can detect objects in low-light or foggy conditions where cameras may struggle. Meanwhile, RGB cameras provide rich texture and color information that can be used to identify objects more easily.

By fusing data from these sensors, computer vision systems can create more reliable and detailed object classifications. This is especially important in autonomous vehicles, drones, and industrial robots, where detecting and correctly identifying objects in real-time is critical for safe operation.
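
One way to picture this is late fusion of detection confidences. The sketch below combines per-sensor probabilities that an object is present by adding their log-odds. It assumes the sensors err independently and that the prior is uniform, which real systems rarely satisfy exactly. The function names and scores are hypothetical.

```python
import math


def logit(p):
    """Log-odds of a probability strictly between 0 and 1."""
    return math.log(p / (1.0 - p))


def fuse_confidences(probabilities):
    """Combine independent detection probabilities via summed log-odds."""
    total = sum(logit(p) for p in probabilities)
    return 1.0 / (1.0 + math.exp(-total))


# Hypothetical scores for the same candidate pedestrian in fog:
camera_score = 0.6  # camera is unsure because contrast is poor
radar_score = 0.7   # radar sees a return at the same location

print(round(fuse_confidences([camera_score, radar_score]), 3))
```

Two moderately confident detections that agree yield a higher combined confidence than either alone, while a score of 0.5 (no information) leaves the other sensor's score unchanged.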

4. Improved Performance in Challenging Conditions

Real-world environments are rarely ideal. Factors such as low light, bad weather, occlusions, and dynamic scenes can all hinder the effectiveness of traditional computer vision systems. Sensor fusion can significantly improve performance in these conditions by leveraging the strengths of various sensors.

For example, infrared sensors are highly effective in low-light environments, allowing for object detection and tracking in the dark. Similarly, radar can operate effectively in adverse weather conditions, such as heavy rain or snow, where cameras and LiDAR may struggle to detect objects accurately.

5. Real-Time Scene Understanding

In applications such as autonomous driving or robotic navigation, real-time scene understanding is crucial. Fusion pipelines can process the individual sensor streams in parallel and merge them into a single, continuously updated estimate, allowing robots and vehicles to make real-time decisions based on a more complete understanding of their environment.
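
Combining streams in real time presupposes that measurements from different sensors refer to roughly the same moment. The sketch below pairs each camera frame with the nearest LiDAR sweep by timestamp and drops frames with no sufficiently close partner. The function name, the timestamps, and the tolerance are all hypothetical, and production systems often rely on hardware triggering or interpolation instead.

```python
from bisect import bisect_left


def nearest_match(camera_times, lidar_times, max_gap_s):
    """Pair each camera timestamp with the nearest LiDAR timestamp.

    lidar_times must be sorted in ascending order. Pairs whose time
    difference exceeds max_gap_s are discarded.
    """
    pairs = []
    for t in camera_times:
        i = bisect_left(lidar_times, t)
        # Only the neighbours on either side of t can be the nearest.
        candidates = lidar_times[max(i - 1, 0):i + 1]
        if not candidates:
            continue
        best = min(candidates, key=lambda s: abs(s - t))
        if abs(best - t) <= max_gap_s:
            pairs.append((t, best))
    return pairs


camera_times = [0.0, 0.033, 0.066, 0.5]  # seconds, about 30 Hz plus a late frame
lidar_times = [0.0, 0.1, 0.2]            # seconds, 10 Hz

matches = nearest_match(camera_times, lidar_times, max_gap_s=0.04)
print(matches)
```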

Techniques such as Kalman filters, particle filters, and Bayesian networks combine sensor data probabilistically, taking the uncertainty of each source into account. This reduces the uncertainty of the fused estimate and improves the overall reliability of real-time decision-making systems.
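
To illustrate how such a filter reduces uncertainty, here is a minimal one-dimensional Kalman filter sketch. It tracks a single scalar (for example, the distance to an obstacle) as a mean and a variance. The function names and all numbers are hypothetical, and real systems use multi-dimensional state vectors and matrices.

```python
def kalman_predict(mean, variance, motion, process_variance):
    """Prediction step: shift the estimate by the expected motion and
    grow the variance to reflect added uncertainty."""
    return mean + motion, variance + process_variance


def kalman_update(mean, variance, measurement, measurement_variance):
    """Update step: blend the prediction with a new measurement.

    The Kalman gain weights the measurement by how uncertain the
    current estimate is relative to the sensor noise.
    """
    gain = variance / (variance + measurement_variance)
    new_mean = mean + gain * (measurement - mean)
    new_variance = (1.0 - gain) * variance
    return new_mean, new_variance


# Start from a camera-based estimate, then fold in a LiDAR reading.
mean, variance = 10.0, 4.0
mean, variance = kalman_update(mean, variance,
                               measurement=12.0, measurement_variance=1.0)
print(mean, variance)

# Predict one time step ahead, assuming the obstacle recedes by 1 m.
predicted_mean, predicted_variance = kalman_predict(
    mean, variance, motion=1.0, process_variance=0.5
)
print(predicted_mean, predicted_variance)
```

Each update shrinks the variance and each prediction grows it. The filter alternates between the two as new measurements arrive.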

Applications of Sensor Fusion in Computer Vision

The combination of sensor fusion and computer vision has many practical applications across various industries:

1. Autonomous Vehicles

In the field of autonomous vehicles, sensor fusion is used to combine data from LiDAR, cameras, radar, and GPS to create a detailed, real-time map of the environment. This integrated information helps the vehicle understand its surroundings, navigate safely, detect obstacles, and make driving decisions. The fusion of data from various sensors allows autonomous vehicles to function in diverse environments and conditions, from clear skies to foggy, low-light settings.

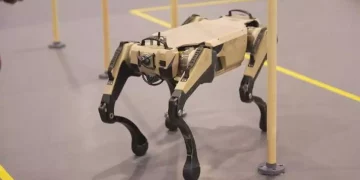

2. Robotics

In robotics, sensor fusion enables robots to navigate and interact with their environments autonomously. Robots equipped with depth sensors, cameras, and IMUs can detect objects, understand spatial arrangements, and adjust their movements accordingly. Sensor fusion improves the robot’s ability to adapt to new and changing environments, whether in industrial settings or during exploration missions.

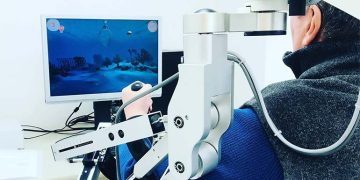

3. Healthcare and Medical Imaging

In the field of healthcare, sensor fusion is being used to enhance medical imaging techniques. By combining data from CT scans, MRI, and ultrasound devices, clinicians can gain a more comprehensive understanding of a patient’s condition. Sensor fusion also improves the performance of robotic surgery systems, where real-time, precise perception of the operating environment is critical.

4. Drones and Unmanned Aerial Vehicles (UAVs)

Drones and UAVs rely on sensor fusion to navigate and perform tasks such as aerial surveillance, delivery, and search-and-rescue. By combining data from cameras, LiDAR, GPS, and IMUs, drones can fly autonomously in complex environments, avoid obstacles, and map out areas in high detail.

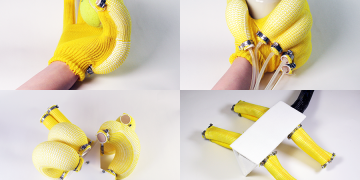

5. Augmented Reality (AR) and Virtual Reality (VR)

In augmented reality (AR) and virtual reality (VR), sensor fusion is used to keep virtual content aligned with the user’s view of the world. By fusing information from cameras, gyroscopes, and accelerometers, AR and VR systems can track the user’s head and device movements and adjust the virtual environment in real-time. This creates a more immersive and responsive experience for users.
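
A classic lightweight way to fuse a gyroscope with an accelerometer is a complementary filter, sketched below for a single pitch angle. The gyroscope is smooth but drifts over time, while the accelerometer is noisy but anchored to gravity. The axis convention, the blend factor `alpha`, and the function names are assumptions made for illustration.

```python
import math


def accel_pitch_rad(ax, ay, az):
    """Pitch angle implied by the direction of gravity in the
    accelerometer reading (assumed axis convention)."""
    return math.atan2(-ax, math.sqrt(ay * ay + az * az))


def complementary_filter(pitch_rad, gyro_rate_rad_s, accel, dt_s, alpha=0.98):
    """Blend the integrated gyroscope rate with the accelerometer angle.

    alpha close to 1 trusts the gyroscope in the short term and lets
    the accelerometer slowly correct long-term drift.
    """
    gyro_estimate = pitch_rad + gyro_rate_rad_s * dt_s
    return alpha * gyro_estimate + (1.0 - alpha) * accel_pitch_rad(*accel)


# Device lying level and at rest, but with an initial pitch error of 0.1 rad.
pitch = 0.1
level_accel = (0.0, 0.0, 9.81)  # gravity along the z axis only
for _ in range(200):
    pitch = complementary_filter(pitch, 0.0, level_accel, dt_s=0.01)

print(pitch)
```

After a couple of seconds of simulated samples the initial error has decayed toward zero, because the accelerometer keeps pulling the estimate back to the gravity reference.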

Conclusion

The integration of sensor fusion with computer vision is revolutionizing the way machines understand and interact with the world. By combining data from multiple sensors, such as cameras, LiDAR, radar, and infrared sensors, sensor fusion enables more accurate, robust, and reliable scene understanding. This has profound implications across various industries, including autonomous driving, robotics, healthcare, and augmented reality.

While challenges remain in processing and integrating large volumes of data in real-time, ongoing advancements in machine learning, computational power, and sensor technology are paving the way for smarter and more capable systems. As sensor fusion continues to evolve, it will play a pivotal role in making autonomous systems safer, more efficient, and better equipped to handle the complexities of the real world.