Introduction

Computer vision has changed how robots and machines perceive and interact with the world. By enabling machines to see, interpret, and understand their surroundings, it supplies the sensory input that robots need to make decisions, navigate environments, and perform complex tasks autonomously. Whether it’s a self-driving car navigating a busy street, a robot assembling intricate parts in a factory, or a surgical robot performing a delicate operation, computer vision is the underlying technology that allows these machines to “see” and “understand” the world around them.

In recent years, advancements in machine learning, particularly deep learning, have further enhanced the capabilities of computer vision systems. Today, robots equipped with computer vision are capable of not only recognizing objects but also understanding the context in which these objects exist, enabling a more sophisticated form of perception. This article explores the fundamental role of computer vision in robotics, particularly in autonomous driving, industrial automation, and medical surgery, highlighting the technology’s evolution, its applications, and the challenges that remain.

Understanding Computer Vision

Computer vision is a field of artificial intelligence (AI) that focuses on enabling machines to interpret and understand visual information from the world. Many approaches take loose inspiration from the way humans process and analyze visual data. At the core of computer vision is the ability to extract meaningful information from images and videos, allowing robots to detect objects, track movement, recognize faces, interpret gestures, and more.

Core Components of Computer Vision

- Image Acquisition: The first step in computer vision involves capturing visual data, typically through cameras, LiDAR, or other imaging sensors. High-resolution cameras and multi-sensor systems are often used in robotics to provide rich, accurate input data.

- Image Processing: Once images are captured, they need to be cleaned up and enhanced before features can be extracted reliably. This can include techniques such as noise filtering, edge detection, and contrast adjustment. Image processing prepares raw data for further analysis.

- Feature Extraction: The next step is to identify specific features in the image, such as edges, corners, textures, or patterns, which are essential for object detection and recognition.

- Object Recognition: Using machine learning algorithms, the robot identifies and classifies objects in its environment based on the extracted features. This may involve training the system on large datasets of labeled images to recognize various objects, such as cars, pedestrians, or surgical instruments.

- Context Understanding: Beyond recognizing objects, advanced computer vision systems can interpret the relationships between objects and understand the broader context. For example, in autonomous driving, the system must not only recognize a pedestrian but also understand their motion relative to the vehicle to predict potential hazards.

- Decision-Making: After interpreting the visual data, the system must make decisions based on its analysis. In autonomous systems, this involves using the information to navigate, manipulate objects, or perform tasks.
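The image processing and feature extraction steps above can be illustrated with a minimal sketch. It applies the standard Sobel operator to a small grayscale image and thresholds the gradient magnitude to obtain an edge mask. The function names (`filter3x3`, `edge_magnitude`, `edge_mask`) and the list-of-lists image representation are assumptions made for this illustration; a production system would normally rely on an optimized image library rather than pure Python loops.

```python
import math
from typing import List

Image = List[List[float]]  # grayscale image stored as rows of pixel intensities

# Standard 3x3 Sobel kernels for horizontal and vertical intensity gradients.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def filter3x3(image: Image, kernel: List[List[int]]) -> Image:
    """Apply a 3x3 kernel at every interior pixel (no padding)."""
    height, width = len(image), len(image[0])
    if height < 3 or width < 3:
        raise ValueError("image must be at least 3x3")
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j]
                for i in range(3) for j in range(3))
            for c in range(width - 2)
        ]
        for r in range(height - 2)
    ]


def edge_magnitude(image: Image) -> Image:
    """Gradient magnitude at each interior pixel."""
    gx = filter3x3(image, SOBEL_X)
    gy = filter3x3(image, SOBEL_Y)
    return [
        [math.hypot(x, y) for x, y in zip(row_x, row_y)]
        for row_x, row_y in zip(gx, gy)
    ]


def edge_mask(image: Image, threshold: float) -> List[List[bool]]:
    """Mark pixels whose gradient magnitude exceeds the threshold."""
    return [[m > threshold for m in row] for row in edge_magnitude(image)]


if __name__ == "__main__":
    # Synthetic image: dark left half, bright right half (one vertical edge).
    demo = [[0.0, 0.0, 0.0, 100.0, 100.0, 100.0] for _ in range(5)]
    for row in edge_mask(demo, threshold=200.0):
        print(row)
```

Because no padding is used, the output is two pixels smaller than the input in each dimension. The threshold is a tuning parameter that depends on the sensor and the scene.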

Computer Vision in Autonomous Driving

Autonomous driving is one of the most prominent applications of computer vision in robotics. Self-driving cars rely heavily on vision systems to understand and navigate their environment safely and efficiently. Computer vision enables autonomous vehicles to perceive objects, understand road conditions, recognize traffic signs, and make driving decisions.

Key Technologies in Autonomous Driving

- Cameras: High-definition cameras are the primary tool for visual perception in autonomous vehicles. These cameras capture images of the surrounding environment, which are then analyzed by computer vision algorithms to identify pedestrians, vehicles, traffic signs, and lane markings.

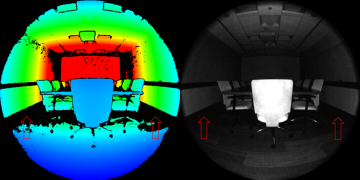

- LiDAR and Radar: While cameras provide visual information, LiDAR (Light Detection and Ranging) and radar systems are often used to measure the distance to objects. LiDAR, in particular, provides highly accurate depth information and can build detailed 3D maps of the environment, which is critical for detecting obstacles and navigating complex environments. Radar is typically less detailed, but it also measures relative speed and tends to be less affected by rain and fog.

- Sensor Fusion: Autonomous vehicles rely on data from multiple sensors—cameras, LiDAR, radar, and ultrasonic sensors. Sensor fusion combines the data from these different sources to create a more comprehensive understanding of the environment. This multi-sensor approach helps to compensate for the limitations of individual sensors and improve the overall robustness of the system.
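One simple way to see how sensor fusion compensates for the limitations of individual sensors is inverse-variance weighting, in which each sensor's distance estimate is weighted by how much it is trusted. The sketch below is only an illustration under stated assumptions: the `RangeEstimate` type, the `fuse` function, and the noise figures in the example are hypothetical, and the sensors are assumed to have independent, unbiased errors. Real vehicles generally use more elaborate filters that also track objects over time.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class RangeEstimate:
    distance_m: float  # estimated distance to the object, in metres
    variance: float    # variance of the measurement noise, in m^2


def fuse(estimates: Sequence[RangeEstimate]) -> RangeEstimate:
    """Combine independent range estimates by inverse-variance weighting."""
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(e.variance <= 0 for e in estimates):
        raise ValueError("variances must be positive")
    weights = [1.0 / e.variance for e in estimates]
    total = sum(weights)
    fused = sum(w * e.distance_m for w, e in zip(weights, estimates)) / total
    # The fused variance is never larger than the smallest input variance.
    return RangeEstimate(distance_m=fused, variance=1.0 / total)


if __name__ == "__main__":
    camera = RangeEstimate(distance_m=21.0, variance=4.0)   # hypothetical noise level
    lidar = RangeEstimate(distance_m=20.0, variance=0.04)   # hypothetical noise level
    print(fuse([camera, lidar]))
```

The fused estimate stays close to the more precise sensor while still using the other measurement, and its variance is lower than that of either input.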

Applications of Computer Vision in Autonomous Driving

- Object Detection and Classification: Computer vision algorithms allow self-driving cars to detect and classify objects in their environment. These include pedestrians, cyclists, other vehicles, road signs, and obstacles. The system must be able to distinguish between objects that demand an immediate response (e.g., a pedestrian crossing the street) and those that only need to be monitored (e.g., parked cars).

- Lane Detection and Tracking: To navigate roads safely, autonomous vehicles must continuously detect and track lane markings. Computer vision algorithms process images of the road to identify the boundaries of lanes, helping the vehicle stay on course and avoid veering off-track.

- Traffic Sign Recognition: Autonomous vehicles must recognize and respond to traffic signs, including speed limits, stop signs, and yield signs. Computer vision is used to identify these signs and interpret their meaning to make driving decisions accordingly.

- Pedestrian Detection and Avoidance: One of the most critical applications of computer vision in autonomous driving is pedestrian detection. Autonomous cars use vision systems to detect pedestrians on or near the road and predict their movement to avoid accidents.

- Navigation and Path Planning: Computer vision also supplies the scene information that path planning depends on in dynamic environments. For example, an autonomous vehicle must decide when to overtake another vehicle, navigate intersections, or adjust speed based on traffic conditions, all of which require real-time analysis of visual data.
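As a rough illustration of lane detection and tracking, the sketch below fits a straight line to the pixels of each lane marking by least squares and estimates how far the lane center lies from the image center. It assumes the lane-marking pixels have already been detected, that the markings are close to straight in the image, and that the camera sits on the vehicle's centerline. The function names `fit_line` and `lateral_offset_px` are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) pixel coordinates, with y increasing downward


def fit_line(points: List[Point]) -> Tuple[float, float]:
    """Least-squares fit of x = slope * y + intercept.

    x is modelled as a function of y because lane markings are
    closer to vertical than horizontal in a forward-facing image.
    """
    n = len(points)
    if n < 2:
        raise ValueError("need at least two points")
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    var_y = sum((y - mean_y) ** 2 for _, y in points)
    if var_y == 0:
        raise ValueError("points must span more than one image row")
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = cov_xy / var_y
    return slope, mean_x - slope * mean_y


def lateral_offset_px(left: List[Point], right: List[Point],
                      image_width: int, row: float) -> float:
    """Offset of the lane center from the image center at the given row.

    A positive value means the lane center lies to the right of the
    image center, so the vehicle sits left of the lane center.
    """
    left_slope, left_icpt = fit_line(left)
    right_slope, right_icpt = fit_line(right)
    left_x = left_slope * row + left_icpt
    right_x = right_slope * row + right_icpt
    return (left_x + right_x) / 2.0 - image_width / 2.0


if __name__ == "__main__":
    left_marking = [(300, 200), (250, 300), (200, 400)]
    right_marking = [(340, 200), (390, 300), (440, 400)]
    print(lateral_offset_px(left_marking, right_marking, image_width=640, row=400))
```

The offset is expressed in pixels. Converting it to metres would require camera calibration, which is outside the scope of this sketch.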

Challenges in Autonomous Driving

Despite significant advancements, autonomous driving faces several challenges:

- Environmental Variability: Weather conditions, such as rain, fog, or snow, can interfere with camera and sensor performance, making it harder for the system to perceive objects accurately.

- Complex Traffic Scenarios: Traffic scenarios can be unpredictable, with pedestrians, cyclists, and other drivers often behaving in unexpected ways. Computer vision systems must be able to interpret a wide range of dynamic situations.

- Regulatory and Ethical Issues: There are also legal and ethical challenges surrounding the deployment of autonomous vehicles, particularly concerning safety standards, liability, and public trust in the technology.

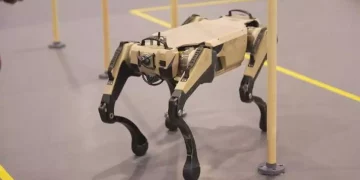

Computer Vision in Industrial Automation

The integration of computer vision in industrial automation has had a transformative effect on manufacturing and production processes. Robots equipped with computer vision can perform tasks such as quality control, object manipulation, and assembly with high precision and efficiency. This has led to increased productivity, reduced human error, and improved workplace safety.

Key Applications in Industrial Automation

- Quality Control and Inspection: One of the most common applications of computer vision in industrial automation is in quality control. Vision systems can be used to inspect products on the assembly line for defects, such as scratches, cracks, or incorrect parts. With suitable optics and resolution, these systems can detect defects too small for the human eye to catch reliably, helping ensure that only products that meet quality standards reach the consumer.

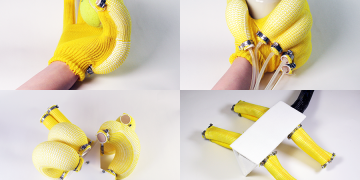

- Robotic Gripping and Manipulation: In automated factories, robots use computer vision to identify and pick up parts from a conveyor belt or assembly line. Vision systems provide the robot with the ability to locate objects, assess their orientation, and adjust its movements to grasp them accurately.

- Assembly Line Automation: Computer vision systems are also used to guide robots in performing assembly tasks, such as fastening bolts, welding, or inserting components. By recognizing and tracking the parts and tools involved, robots can perform assembly tasks with high precision.

- Warehouse Automation: In warehouses, robots equipped with computer vision can navigate the environment, identify inventory, and pick and place items for storage or shipment. Vision systems are essential for autonomous mobile robots (AMRs) used in logistics and distribution centers.

- Predictive Maintenance: Computer vision can also be used to monitor the condition of equipment and machinery in industrial settings. By analyzing images of machinery, the system can detect signs of wear and tear, enabling predictive maintenance and reducing downtime.
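To make the gripping step more concrete, the following sketch estimates where a part is and how it is oriented from a binary mask, using image moments. It assumes the part has already been segmented from the background, with the mask given as rows of 0 and 1. The function name `part_pose` is hypothetical, and a real cell would still need to convert pixel coordinates into robot coordinates through calibration.

```python
import math
from typing import List, Tuple


def part_pose(mask: List[List[int]]) -> Tuple[float, float, float]:
    """Return (centroid_x, centroid_y, angle_rad) of the foreground pixels.

    x is the column index and y is the row index. The angle is the
    direction of the principal axis, measured from the +x axis toward
    the +y axis (y points down in image coordinates).
    """
    pixels = [(c, r) for r, row in enumerate(mask)
              for c, value in enumerate(row) if value]
    if not pixels:
        raise ValueError("mask contains no foreground pixels")
    n = len(pixels)
    cx = sum(x for x, _ in pixels) / n
    cy = sum(y for _, y in pixels) / n
    # Second-order central moments describe the spread of the shape.
    mu20 = sum((x - cx) ** 2 for x, _ in pixels)
    mu02 = sum((y - cy) ** 2 for _, y in pixels)
    mu11 = sum((x - cx) * (y - cy) for x, y in pixels)
    angle = 0.5 * math.atan2(2.0 * mu11, mu20 - mu02)
    return cx, cy, angle


if __name__ == "__main__":
    diagonal_part = [
        [1, 0, 0],
        [0, 1, 0],
        [0, 0, 1],
    ]
    cx, cy, angle = part_pose(diagonal_part)
    print(cx, cy, math.degrees(angle))
```

For a symmetric shape such as a disc or square, the principal axis is not well defined, so the returned angle should not be relied on in that case.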

Challenges in Industrial Automation

While the use of computer vision in industrial automation has brought significant benefits, there are still challenges to overcome:

- Lighting and Environmental Conditions: In industrial environments, lighting conditions can vary widely, and shadows, reflections, and dust can interfere with image quality. Robust computer vision algorithms must be able to handle such variations effectively.

- Real-time Processing: Industrial automation systems often require real-time processing of visual data to make immediate decisions. This demands powerful computing resources and efficient algorithms.

- Object Occlusion: In complex environments, objects may be partially occluded or in motion, making it difficult for vision systems to detect and track them accurately.
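One common way to reduce sensitivity to lighting changes is to normalize each image patch to zero mean and unit variance before comparing it with a reference. The sketch below shows this under a simplifying assumption: the lighting change is modelled as a uniform gain and offset applied to the whole patch, which does not cover shadows or local reflections. The function names `normalize` and `max_deviation` and the sample pixel values are hypothetical.

```python
from statistics import fmean, pstdev
from typing import List, Sequence


def normalize(pixels: Sequence[float]) -> List[float]:
    """Rescale a patch to zero mean and unit standard deviation."""
    mean = fmean(pixels)
    std = pstdev(pixels)
    if std == 0:
        raise ValueError("patch has no contrast to normalize")
    return [(p - mean) / std for p in pixels]


def max_deviation(reference: Sequence[float], observed: Sequence[float]) -> float:
    """Largest per-pixel difference between two normalized patches."""
    if len(reference) != len(observed):
        raise ValueError("patches must have the same number of pixels")
    ref_n = normalize(reference)
    obs_n = normalize(observed)
    return max(abs(a - b) for a, b in zip(ref_n, obs_n))


if __name__ == "__main__":
    reference = [10, 20, 30, 40, 50, 60]         # flattened reference patch
    brighter = [1.5 * p + 20 for p in reference]  # same part under stronger light
    scratched = list(brighter)
    scratched[2] = 0                              # simulated dark defect
    print(max_deviation(reference, brighter))     # close to zero
    print(max_deviation(reference, scratched))    # clearly larger
```

A positive gain and any offset cancel out in the normalization, so the brighter patch matches the reference while the defect still stands out.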

Computer Vision in Medical Surgery

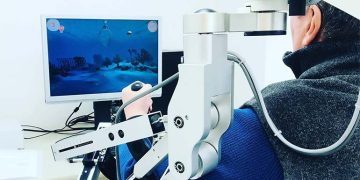

In the field of medicine, computer vision is transforming how surgeries are performed, providing enhanced precision, real-time guidance, and improved patient outcomes. Surgical robots and computer-assisted systems rely on computer vision to visualize the patient’s anatomy, identify critical structures, and assist surgeons in performing complex procedures.

Key Applications in Medical Surgery

- Robotic-Assisted Surgery: Surgical robots, such as the da Vinci Surgical System, use vision systems to give the surgeon a real-time, high-definition, 3D view of the surgical site. Guided by this view, surgeons can perform minimally invasive procedures with greater accuracy.

- Tumor Detection and Diagnosis: Computer vision is used in imaging systems to detect tumors in medical scans such as CT scans, MRIs, and X-rays. By analyzing the images, AI systems can assist doctors in identifying potential areas of concern and reaching more accurate diagnoses.

- Navigation and Guidance: In minimally invasive surgery, computer vision can provide navigation and guidance, allowing surgeons to operate with higher precision. For example, vision systems can track surgical instruments and ensure they are used in the correct position relative to the anatomy.

- Augmented Reality (AR) for Surgery: AR technologies combined with computer vision allow surgeons to overlay important information, such as 3D models of organs or blood vessels, onto the patient’s body during surgery. This provides enhanced situational awareness and helps guide the surgeon’s movements.

- Post-Operative Monitoring: Computer vision can also be used in post-operative care to monitor the recovery of patients. Vision systems can assess wound healing, detect infections, or track the movement of patients after surgery.
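Overlaying a 3D model on the camera view, as in the AR application above, ultimately requires mapping 3D points to pixel positions. A minimal sketch of that mapping with the pinhole camera model follows. It assumes the 3D points are already expressed in the camera's coordinate frame (that is, registration between the model and the patient has been done elsewhere) and that lens distortion is negligible. The `Intrinsics` type, the `project` function, and the numeric values are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Intrinsics:
    fx: float  # focal length in pixels along x
    fy: float  # focal length in pixels along y
    cx: float  # principal point x, in pixels
    cy: float  # principal point y, in pixels


def project(point_cam: Tuple[float, float, float], k: Intrinsics) -> Tuple[float, float]:
    """Project a 3D point in the camera frame onto the image plane."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    u = k.fx * x / z + k.cx
    v = k.fy * y / z + k.cy
    return u, v


if __name__ == "__main__":
    camera = Intrinsics(fx=800.0, fy=800.0, cx=320.0, cy=240.0)
    # A model vertex 10 cm in front of the camera, slightly right of and above the axis.
    print(project((0.01, -0.005, 0.1), camera))
```

Each vertex of the organ or vessel model would be projected this way and then drawn over the live image. Points with non-positive depth are rejected because they lie behind the camera.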

Challenges in Medical Surgery

Despite the advancements, computer vision in surgery still faces challenges:

- Real-time Accuracy: Surgical procedures require real-time feedback and highly accurate vision systems. Even small errors in perception can lead to serious complications.

- Image Quality and Resolution: High-quality imaging is critical in surgery, but factors such as tissue motion, blood, or light reflection can degrade image clarity.

- Regulatory and Ethical Issues: The integration of AI and computer vision into surgery raises questions regarding accountability, patient consent, and the ethical use of technology in healthcare.

Conclusion

Computer vision is the cornerstone of robotic perception, enabling robots to understand and interact with the world in ways that were out of reach for earlier systems. In autonomous driving, industrial automation, and medical surgery, computer vision is enhancing efficiency, safety, and precision. While significant progress has been made, challenges such as real-time processing, environmental variability, occlusion, and regulatory and ethical questions remain. As technology continues to evolve, the potential applications of computer vision in robotics will only expand, shaping the future of industries and improving the quality of human life.

In the coming years, we can expect more capable robotic systems that see, understand, and respond to the world around them with greater accuracy. The future of robotics is closely linked to the continued development of computer vision, and its impact will be felt across industries from healthcare to manufacturing to transportation.