Introduction

The ability of machines to recognize, interpret, and respond to human emotions has long fascinated researchers in artificial intelligence (AI), robotics, and human-computer interaction (HCI). While traditional computing systems focus primarily on processing data and executing tasks, modern AI technologies are increasingly designed to interact with people in more emotionally intelligent ways. Affective computing, the development of systems that can sense, interpret, and respond to human emotions, has the potential to transform how we engage with machines in both personal and professional contexts.

From virtual assistants and healthcare robots to autonomous vehicles and customer service agents, the ability of machines to understand and respond to human emotions is becoming increasingly important. Machines that can identify emotional cues, whether through voice tone, facial expressions, or physiological signals, can provide more personalized, empathetic interactions and foster stronger human-machine relationships. However, this goal raises several technical, ethical, and psychological questions: How do we teach machines to recognize emotions accurately? Can machines truly feel emotions, or are they merely simulating empathy? And what are the implications of machines with emotional intelligence?

This article explores the research and advancements in affective computing and emotion recognition, examining how machines understand and interpret human emotions, the technologies behind this process, and the potential applications and challenges of emotionally intelligent systems.

The Science of Emotion and Machine Interaction

Understanding Human Emotions

To develop systems that can respond to human emotions, it is essential to first understand what emotions are and how they are expressed. Emotions are complex psychological and physiological responses to stimuli, often involving subjective feelings, physical reactions, and behavioral changes. Psychologists have proposed a set of basic emotions that appear to be recognized across many cultures, including happiness, sadness, fear, anger, surprise, and disgust, although how universal these categories really are remains debated.

Human emotions can be communicated in many ways, including:

- Facial Expressions: Facial expressions are one of the most immediate and visible forms of emotional expression. Research by Paul Ekman associated six primary emotions (happiness, sadness, anger, surprise, fear, and disgust) with distinct facial movements that he argued are expressed similarly across cultures.

- Voice Tone and Speech Patterns: The way we speak, including tone, pitch, and pace, can convey a wide range of emotions. For instance, a high-pitched, fast-paced voice may indicate excitement, while a slow, deep tone may convey sadness or seriousness.

- Body Language and Gestures: Posture, hand movements, and other bodily gestures can indicate emotional states. A person’s stance, for example, may reflect their level of confidence or nervousness.

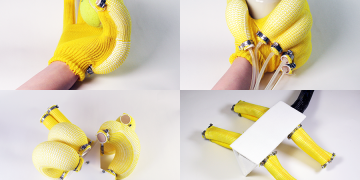

- Physiological Responses: Emotions are often accompanied by physiological changes, such as changes in heart rate, sweating, or breathing patterns. These signals can be measured using sensors such as heart rate monitors, galvanic skin response (GSR) sensors, and eye-tracking devices.

To engage with people in emotionally aware ways, AI systems must be able to interpret these cues and respond appropriately. Understanding the multifaceted nature of human emotion is key to building machines that can take part in meaningful interactions.

Emotion Recognition Technologies

1. Computer Vision for Facial Expression Recognition

One of the most widely studied methods for emotion recognition is facial expression analysis. As mentioned earlier, facial expressions are among the most direct indicators of emotional states. Computer vision techniques, powered by deep learning algorithms, have made significant strides in recognizing and interpreting these expressions.

- Facial Action Coding System (FACS): Developed by Paul Ekman and Wallace V. Friesen, FACS is a comprehensive system for categorizing facial movements. It breaks down facial expressions into “action units” (AUs), which correspond to movements of individual facial muscles or muscle groups. FACS itself describes movements rather than emotions; modern AI systems detect AUs and then infer emotions from the combinations that occur together.

- Deep Learning and Convolutional Neural Networks (CNNs): CNNs, a type of deep learning algorithm, are highly effective at detecting facial expressions. By training on large datasets of labeled facial images, CNNs can learn to recognize patterns in facial movements that correspond to different emotions.

- Real-Time Emotion Detection: AI-driven emotion recognition systems, such as those from Affectiva and Realeyes, can process facial expressions in real time. These systems are commonly used in marketing, healthcare, and customer service applications to gauge emotional responses and improve user engagement.
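The step from detected action units to an emotion label can be illustrated with a small rule-based matcher. This is a minimal sketch, not how any particular product works: the AU prototypes below are simplified versions of combinations commonly cited in the FACS literature, and the function names, the Jaccard similarity measure, and the `min_score` threshold are assumptions chosen for illustration.

```python
# Simplified AU prototypes for six basic emotions (illustrative assumption;
# real coding schemes list several variants per emotion).
PROTOTYPES = {
    "happiness": {6, 12},            # cheek raiser + lip corner puller
    "sadness": {1, 4, 15},
    "surprise": {1, 2, 5, 26},
    "fear": {1, 2, 4, 5, 7, 20, 26},
    "anger": {4, 5, 7, 23},
    "disgust": {9, 15, 16},
}


def jaccard(a, b):
    """Overlap between two AU sets: |a & b| / |a | b|."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def match_emotion(active_aus, min_score=0.5):
    """Return (label, score) for the prototype closest to the active AUs.

    Falls back to "unknown" when no prototype overlaps enough.
    """
    active = set(active_aus)
    best_label, best_score = "unknown", 0.0
    for label, prototype in PROTOTYPES.items():
        score = jaccard(active, prototype)
        if score > best_score:
            best_label, best_score = label, score
    if best_score < min_score:
        return "unknown", best_score
    return best_label, best_score


if __name__ == "__main__":
    print(match_emotion([6, 12]))
    print(match_emotion([4, 5, 7]))
```

In practice a learned model such as a CNN would usually replace both the AU detector and this lookup, but the table makes explicit what such a model has to learn.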

2. Voice-Based Emotion Recognition

In addition to facial expressions, machines can recognize emotions through the tone, pitch, and cadence of speech. Voice-based emotion recognition analyzes vocal characteristics such as:

- Pitch: Higher pitches often correspond to emotions like excitement or fear, while lower pitches may indicate sadness or anger.

- Speech Rate: Fast speech is often associated with excitement or anxiety, while slower speech may indicate sadness or boredom.

- Volume: Shouting or loud speech might indicate anger, while soft speech can signify fear or sadness.

- Prosody: The rhythm, stress, and intonation of speech also carry emotional meaning. For example, a rising intonation may indicate a question or curiosity, while a flat tone can imply disinterest or monotony.
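Two of the characteristics above, pitch and volume, can be estimated directly from raw audio samples. The following is a minimal sketch using only the standard library; the function names, the 75 to 400 Hz search range, and the use of plain autocorrelation are assumptions for illustration, and production systems typically use more robust pitch trackers.

```python
import math


def rms_energy(samples):
    """Root-mean-square amplitude, a simple proxy for loudness."""
    if not samples:
        raise ValueError("samples must not be empty")
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def estimate_pitch_hz(samples, sample_rate, fmin=75.0, fmax=400.0):
    """Estimate the fundamental frequency from the autocorrelation peak.

    Searches lags that correspond to frequencies between fmin and fmax
    and returns 0.0 if no positive correlation is found (e.g. silence).
    """
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_corr = 0, 0.0
    for lag in range(min_lag, max_lag + 1):
        corr = sum(samples[i] * samples[i + lag]
                   for i in range(len(samples) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return sample_rate / best_lag if best_lag else 0.0


if __name__ == "__main__":
    rate = 8000
    # Synthetic 200 Hz tone standing in for a voiced speech frame.
    tone = [0.5 * math.sin(2 * math.pi * 200 * i / rate) for i in range(800)]
    print(estimate_pitch_hz(tone, rate), rms_energy(tone))
```

Speech rate and prosody need longer windows and, usually, word or syllable boundaries, so they are left out of this sketch.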

Techniques used in voice-based emotion recognition include:

- Speech Emotion Recognition (SER): SER systems extract acoustic features such as pitch, energy, and spectral characteristics, then classify emotional states with sequence models such as hidden Markov models (HMMs) or recurrent neural networks (RNNs).

- Deep Learning: Long short-term memory (LSTM) networks, a type of RNN built to retain information across longer sequences, are increasingly used to model sequential speech data and recognize emotions from the way vocal cues unfold over time.

Voice-based emotion recognition has potential applications in virtual assistants (e.g., Amazon Alexa, Apple Siri), call centers, and therapeutic robots, where detecting frustration, joy, or sadness in a user's voice would allow the system to respond more empathetically.

3. Physiological and Biofeedback Systems

Physiological signals are powerful indicators of emotional states. Advances in biofeedback technologies enable machines to monitor physical responses that are closely tied to emotions. These systems use sensors to measure:

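- Heart Rate Variability (HRV): HRV measures the variation in time between successive heartbeats, which reflects autonomic nervous system activity. Reduced variability is often associated with stress, while higher variability tends to accompany relaxed states. Heart rate itself is also informative: an increased rate may indicate excitement or fear, while a decreased rate may signal relaxation or sadness.

- Galvanic Skin Response (GSR): GSR measures the electrical conductance of the skin, which rises with sweat gland activity when a person is stressed, anxious, or excited. It tracks the intensity of arousal rather than whether the emotion is positive or negative.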

- Electroencephalography (EEG): EEG measures brainwave patterns that are associated with different emotional states, such as relaxation, focus, or stress.

Biofeedback systems are commonly used in therapeutic applications, such as stress management programs, and in wearable devices that help users monitor their emotional well-being.
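HRV is usually summarized from the intervals between successive heartbeats (RR intervals). The sketch below computes two standard time-domain measures, SDNN and RMSSD, along with mean heart rate. The function names and the sample intervals are illustrative assumptions, and mapping these numbers to an emotional state would require a separate, validated model.

```python
import math


def mean_heart_rate_bpm(rr_ms):
    """Mean heart rate in beats per minute from RR intervals in milliseconds."""
    if not rr_ms:
        raise ValueError("need at least one RR interval")
    return 60000.0 / (sum(rr_ms) / len(rr_ms))


def sdnn(rr_ms):
    """Sample standard deviation of RR intervals (overall variability)."""
    if len(rr_ms) < 2:
        raise ValueError("need at least two RR intervals")
    mean = sum(rr_ms) / len(rr_ms)
    return math.sqrt(sum((x - mean) ** 2 for x in rr_ms) / (len(rr_ms) - 1))


def rmssd(rr_ms):
    """Root mean square of successive differences (beat-to-beat variability)."""
    if len(rr_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


if __name__ == "__main__":
    rr = [800, 810, 790, 800]  # made-up intervals for demonstration only
    print(mean_heart_rate_bpm(rr), sdnn(rr), rmssd(rr))
```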

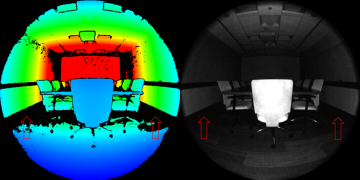

4. Multimodal Emotion Recognition

To improve the accuracy and depth of emotional understanding, many modern systems integrate multiple types of data (facial expressions, voice tone, physiological signals, and even body language) into a single emotion recognition model. This multimodal approach lets one channel compensate for noise or ambiguity in another, giving machines a more complete picture of human emotions and allowing them to respond more effectively.

For example, a healthcare robot working with a patient might use voice analysis to detect stress in the patient’s speech while simultaneously analyzing facial expressions and physiological signals to assess their emotional state more accurately. By combining data from multiple sources, the system can make more nuanced decisions and deliver more personalized responses.
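One simple way to combine modalities is late fusion: each modality produces its own probability estimate per emotion, and the system takes a weighted average. The sketch below assumes this approach. The modality names, weights, and probabilities are hypothetical, and real systems may instead fuse features earlier or learn the weights from data.

```python
def fuse_predictions(modality_probs, weights):
    """Weighted average of per-modality emotion probabilities.

    modality_probs: {modality: {emotion: probability}}
    weights: {modality: relative trust in that modality}
    Only modalities present in modality_probs are used, so a missing
    sensor is handled by renormalizing over the remaining weights.
    """
    if not modality_probs:
        raise ValueError("need at least one modality")
    total_weight = sum(weights[m] for m in modality_probs)
    labels = set()
    for probs in modality_probs.values():
        labels.update(probs)
    return {
        label: sum(weights[m] * probs.get(label, 0.0)
                   for m, probs in modality_probs.items()) / total_weight
        for label in labels
    }


if __name__ == "__main__":
    # Hypothetical outputs from three separate classifiers.
    observations = {
        "face": {"calm": 0.6, "stressed": 0.4},
        "voice": {"calm": 0.2, "stressed": 0.8},
        "physio": {"calm": 0.3, "stressed": 0.7},
    }
    trust = {"face": 0.5, "voice": 0.3, "physio": 0.2}
    fused = fuse_predictions(observations, trust)
    print(max(fused, key=fused.get), fused)
```

Here the face classifier alone leans toward "calm", but the voice and physiological signals shift the fused estimate toward "stressed", which is the kind of correction the multimodal approach is meant to provide.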

Applications of Emotion Recognition Technology

1. Virtual Assistants and Customer Service

AI-powered virtual assistants, such as Apple’s Siri, Amazon’s Alexa, and Google Assistant, have become ubiquitous in our daily lives. These assistants are increasingly being designed to detect and respond to the emotional states of users, improving the quality of interactions. For example, a virtual assistant might detect frustration in a user’s voice and respond with more empathy, or it might adjust its tone to suit a user’s mood, enhancing the user experience.

In customer service, emotion recognition technology can be used to assess the emotional state of callers in real time. This allows call centers to prioritize calls from distressed or upset customers, providing a more compassionate and tailored service experience.
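Prioritizing distressed callers can be sketched as a priority queue keyed on a distress score. This is an illustrative design rather than a description of any real call-center product: the `CallQueue` class, the 0 to 1 score range, and the caller IDs are assumptions, and the score is presumed to come from an upstream emotion recognition model.

```python
import heapq
import itertools


class CallQueue:
    """Serve callers with the highest distress score first.

    Ties are broken by arrival order so equally distressed callers
    are handled first come, first served.
    """

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()

    def add_call(self, caller_id, distress):
        if not 0.0 <= distress <= 1.0:
            raise ValueError("distress must be between 0 and 1")
        # heapq is a min-heap, so negate the score to pop the highest first.
        heapq.heappush(self._heap, (-distress, next(self._arrival), caller_id))

    def next_call(self):
        if not self._heap:
            raise IndexError("no calls waiting")
        _, _, caller_id = heapq.heappop(self._heap)
        return caller_id

    def __len__(self):
        return len(self._heap)


if __name__ == "__main__":
    queue = CallQueue()
    for caller, score in [("A", 0.2), ("B", 0.9), ("C", 0.9), ("D", 0.5)]:
        queue.add_call(caller, score)
    while len(queue):
        print(queue.next_call())
```

A production system would also need to cap waiting time so that calm callers are not starved indefinitely.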

2. Healthcare and Therapy

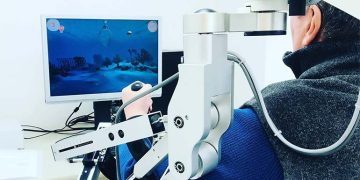

Emotion recognition is particularly valuable in healthcare, especially in mental health and therapy. Robots and AI systems equipped with emotion recognition technology can support clinicians in screening for and monitoring mental health conditions such as depression, anxiety, and PTSD. By analyzing a patient’s emotional state over time, healthcare professionals can gain insight into the patient’s progress and adjust treatment plans accordingly.

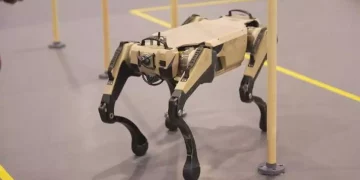

- Therapeutic Robots: Robots such as Pepper, a humanoid social robot, and Paro, a therapeutic robot used in elderly care, are designed to sense users’ emotional cues and engage with them in a compassionate way. These robots can provide emotional support, help monitor mental well-being, and assist in therapeutic exercises.

- Virtual Therapists: AI-driven virtual therapists, such as the chatbot Woebot, infer a user’s emotional state from the conversation in order to interact in a more human-like way, offering support for mental health issues and providing cognitive behavioral therapy (CBT) exercises tailored to that state.

3. Marketing and Advertising

In marketing, understanding consumer emotions can significantly enhance the effectiveness of campaigns. Emotion recognition technology can analyze how people react to advertisements, product displays, or store environments. Marketers can use this data to fine-tune their campaigns and create more emotionally resonant content that drives engagement and sales.

- Emotion Analytics: Companies like Affectiva and Realeyes analyze signals such as facial expressions and voice to measure emotional reactions to advertisements and media content, providing insight into consumer sentiment.

4. Autonomous Vehicles and Robotics

In autonomous vehicles, emotion recognition can be used to improve safety and enhance the driving experience. For example, a self-driving car could detect a passenger’s stress or anxiety and take actions to calm the person, such as adjusting the music or temperature. Similarly, robots in healthcare, customer service, or hospitality can use emotion recognition to gauge the mood of users and adapt their behavior accordingly.
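A system that reacts to every momentary spike in a stress estimate would switch its behavior on and off erratically. One common remedy, sketched below, is to smooth the score with an exponential moving average and use separate on and off thresholds (hysteresis). The `StressMonitor` class, its parameter values, and the 0 to 1 stress score are illustrative assumptions, not a description of any deployed vehicle system.

```python
class StressMonitor:
    """Decide when to start and stop a calming response.

    The raw stress score is smoothed, and the response turns on at a
    higher threshold than it turns off to avoid rapid toggling.
    """

    def __init__(self, alpha=0.5, on_threshold=0.7, off_threshold=0.4):
        if off_threshold >= on_threshold:
            raise ValueError("off_threshold must be below on_threshold")
        self.alpha = alpha
        self.on_threshold = on_threshold
        self.off_threshold = off_threshold
        self.smoothed = None
        self.calming = False

    def update(self, stress_score):
        """Feed one new score and return whether calming mode is active."""
        if self.smoothed is None:
            self.smoothed = stress_score
        else:
            self.smoothed = (self.alpha * stress_score
                             + (1 - self.alpha) * self.smoothed)
        if not self.calming and self.smoothed >= self.on_threshold:
            self.calming = True
        elif self.calming and self.smoothed <= self.off_threshold:
            self.calming = False
        return self.calming


if __name__ == "__main__":
    monitor = StressMonitor()
    for score in [0.2, 0.9, 0.9, 0.9, 0.9, 0.5, 0.3, 0.1, 0.1]:
        print(score, monitor.update(score))
```

The calming flag could then drive the actions mentioned above, such as adjusting the music or temperature.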

Challenges in Emotion Recognition

While the potential for emotion recognition in machines is vast, researchers and developers face several challenges:

- Cultural and Individual Differences: Emotional expression varies widely across cultures, and individuals may express emotions differently. Developing systems that can account for these variations is crucial for accuracy.

- Privacy and Ethical Concerns: The collection of emotional data raises privacy concerns. Users must be informed of how their emotional data is being used, and consent should be obtained before collecting such data.

- Context and Ambiguity: Emotions are context-dependent, and the same facial expression or tone of voice may have different meanings depending on the situation. Machines must be able to understand context to accurately interpret emotions.

- Machine Simulation vs. True Emotion: While machines can simulate emotional responses, the question remains whether they can truly feel emotions. This philosophical dilemma has implications for how we interact with machines and what we expect from them in emotionally sensitive contexts.

Conclusion

Research into how machines recognize, interpret, and respond to human emotions is at the forefront of AI and robotics development. Emotion recognition technology has the potential to reshape industries ranging from healthcare and customer service to marketing and entertainment. While significant progress has been made in creating emotionally intelligent machines, challenges such as cultural sensitivity, privacy, and contextual understanding remain.

As AI and robotics continue to advance, integrating emotional intelligence into machines could pave the way for more empathetic, human-like interactions. Emotionally aware machines promise to enhance user experiences, support mental health care, and create new possibilities for human-robot collaboration, making our interactions with technology more intuitive, compassionate, and effective.