Introduction

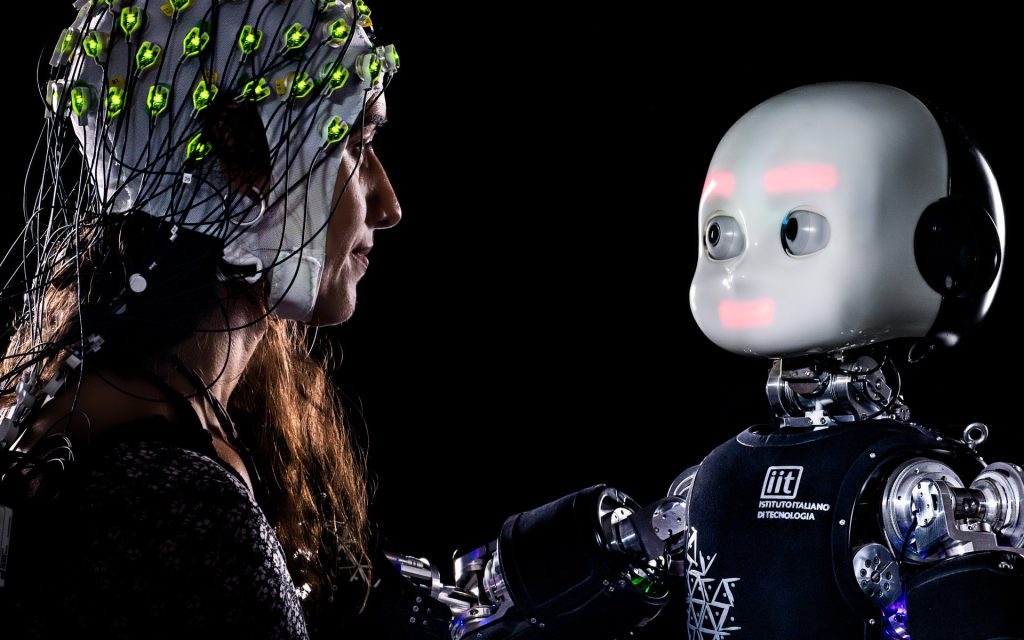

The ability of machines to understand, interpret, and respond to human emotions represents one of the most profound shifts in the relationship between humans and technology. Traditionally, machines and computers were seen as logical, unemotional tools designed to execute tasks with precision and efficiency. However, the evolution of affective computing has introduced a new paradigm: machines that can recognize, interpret, and simulate human emotions, creating a more natural and intuitive interaction between humans and technology.

This shift is particularly important in the context of human-machine emotional interaction. As robots, AI systems, and smart devices become increasingly embedded in our daily lives, they need to respond to the emotional needs and psychological states of their users. The development of systems with emotional intelligence allows for richer, more adaptive interactions, which could significantly impact industries such as healthcare, customer service, entertainment, and education.

In this article, we will explore the field of affective computing, its underlying technologies, its applications in human-machine emotional interaction, and the challenges and ethical considerations that come with creating emotionally aware machines. The ultimate goal is to understand how emotional AI can enhance our interactions with machines and reshape the way we live and work.

What is Affective Computing?

Affective computing refers to the development of systems and devices that can recognize, interpret, and respond to human emotions. It is an interdisciplinary field that combines elements of computer science, psychology, neuroscience, and cognitive science. The core objective of affective computing is to enable machines to detect emotional signals and act in ways that are emotionally intelligent, thereby improving the quality of human-computer interaction.

Unlike traditional computing, which focuses primarily on logic and performance, affective computing seeks to add a layer of empathy to machine behavior. It draws on a wide range of emotional signals, from facial expressions and body language to tone of voice and physiological indicators such as heart rate and skin conductance.

Components of Affective Computing:

- Emotion Recognition: This involves using sensors, algorithms, and AI to detect emotions in human beings. It could involve facial expression analysis, speech emotion recognition, and even physiological data monitoring.

- Emotion Simulation: Affective computing also involves systems that can simulate emotions, allowing machines to interact with humans in an emotionally engaging manner.

- Emotion Synthesis and Response: Once emotions are recognized, the system can respond in an appropriate manner, adjusting its actions, speech, or behavior based on the detected emotional state of the user.
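
The recognition and response components above can be sketched as a small pipeline. This is a minimal illustration, not a reference design: the `EmotionEstimate` type, the `RESPONSE_STYLES` table, the labels, and the 0.6 confidence threshold are all assumptions made up for the example. The per-emotion scores would come from a real recognizer in practice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EmotionEstimate:
    label: str         # e.g. "happy", "frustrated", "neutral"
    confidence: float  # share of the total score held by the top label


# Hypothetical mapping from a detected emotion to a response style.
RESPONSE_STYLES = {
    "frustrated": "apologize and simplify the next step",
    "sad": "acknowledge the feeling and slow the pace",
    "happy": "keep the current pace",
}
NEUTRAL_STYLE = "continue with the default behavior"


def recognize(scores):
    """Emotion recognition: pick the highest-scoring label."""
    if not scores:
        return EmotionEstimate("neutral", 0.0)
    label = max(scores, key=scores.get)
    total = sum(scores.values())
    confidence = scores[label] / total if total > 0 else 0.0
    return EmotionEstimate(label, confidence)


def respond(estimate, min_confidence=0.6):
    """Emotion response: adapt only when the estimate is confident enough."""
    if estimate.confidence < min_confidence:
        return NEUTRAL_STYLE
    return RESPONSE_STYLES.get(estimate.label, NEUTRAL_STYLE)


estimate = recognize({"frustrated": 0.8, "happy": 0.2})
print(estimate.label, respond(estimate))
```

Falling back to a neutral response when confidence is low is one way to avoid reacting to an emotion the user is not actually feeling.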

Affective computing has applications in everything from virtual assistants and customer service chatbots to therapeutic robots and self-driving cars.

Technologies Behind Affective Computing

Several technologies power affective computing systems, including computer vision, speech recognition, natural language processing (NLP), and machine learning. These technologies allow machines to analyze human emotional data and respond intelligently.

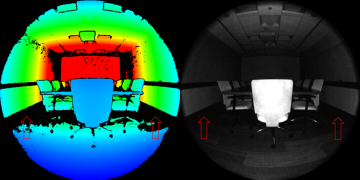

1. Emotion Recognition via Computer Vision

One of the best-known methods of emotion recognition is facial expression analysis. Facial expressions are widely treated as indicators of emotion, with particular expressions associated with feelings such as happiness, sadness, anger, or surprise, although how consistently these associations hold across cultures and contexts is still debated.

- Facial Action Coding System (FACS): FACS describes facial expressions in terms of individual facial muscle movements called action units (AUs). By detecting which AUs are active, AI systems can infer the emotion a face is likely expressing.

- Deep Learning for Facial Expression Recognition: Deep learning models, particularly convolutional neural networks (CNNs), are trained on large datasets of human faces to recognize subtle facial cues that indicate emotions. These systems can analyze users' facial expressions in real time to infer their emotional state.
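
To make the FACS idea concrete, the sketch below matches a set of detected action units against prototype combinations. The AU sets are simplified versions of commonly cited prototypes (for example, AU6 plus AU12 for happiness) and are illustrative only. The `match_emotion` function and its 0.75 overlap threshold are assumptions for this example, and the step that detects AUs from an image is not shown.

```python
# Simplified, commonly cited prototype AU combinations (illustrative only).
PROTOTYPES = {
    "happiness": {6, 12},        # cheek raiser + lip corner puller
    "sadness": {1, 4, 15},       # inner brow raiser, brow lowerer, lip corner depressor
    "surprise": {1, 2, 5, 26},   # brow raisers, upper lid raiser, jaw drop
    "anger": {4, 5, 7, 23},      # brow lowerer, lid raiser, lid tightener, lip tightener
}


def match_emotion(active_aus, min_overlap=0.75):
    """Return (label, score) for the prototype best covered by the active AUs.

    The score is the fraction of a prototype's AUs that are present.
    If no prototype reaches min_overlap, the label is "unknown".
    """
    best_label, best_score = "unknown", 0.0
    for label, prototype in PROTOTYPES.items():
        score = len(prototype & active_aus) / len(prototype)
        if score > best_score:
            best_label, best_score = label, score
    if best_score < min_overlap:
        return "unknown", best_score
    return best_label, best_score


print(match_emotion({6, 12}))
print(match_emotion({4}))
```

A learned model such as a CNN replaces this hand-written lookup with patterns learned from labeled faces, but the input-to-label structure is the same.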

2. Speech Emotion Recognition (SER)

Emotion recognition through speech involves analyzing prosody, which is the rhythm, pitch, tone, and loudness of speech. Changes in these factors often correspond with specific emotional states.

- Voice Stress Analysis: Affective computing systems analyze the acoustic properties of a speaker’s voice to detect stress, happiness, sadness, or anger. Changes in vocal tone, pitch, and tempo can be powerful emotional indicators.

- Natural Language Processing (NLP): NLP allows machines to process and understand human language. By analyzing the sentiment and emotional content of a person’s speech or text, machines can gauge the emotional tone behind the words, adjusting their response accordingly.
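
As a rough illustration of prosodic analysis, the sketch below computes two simple features from raw audio samples: loudness as root-mean-square (RMS) energy, and a crude pitch estimate from the zero-crossing rate. The function names and the synthetic sine-wave "voices" are assumptions for this example. Real systems use more robust pitch trackers and many more features.

```python
import math


def rms(samples):
    """Loudness proxy: root-mean-square amplitude."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def zero_crossing_rate(samples):
    """Fraction of adjacent sample pairs where the signal changes sign."""
    crossings = sum(
        1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0)
    )
    return crossings / (len(samples) - 1)


def estimate_pitch_hz(samples, sample_rate):
    """Crude pitch estimate: a pure tone crosses zero twice per cycle."""
    return zero_crossing_rate(samples) * sample_rate / 2


def sine(freq_hz, amplitude, sample_rate=8000, seconds=0.5):
    """Synthetic stand-in for a voiced sound."""
    n = int(sample_rate * seconds)
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
        for i in range(n)
    ]


calm = sine(120, 0.2)    # lower pitch, quieter
tense = sine(240, 0.8)   # higher pitch, louder

for name, signal in (("calm", calm), ("tense", tense)):
    print(name, round(rms(signal), 3), round(estimate_pitch_hz(signal, 8000), 1))
```

Features like these would be passed to a classifier. On their own, they only show that a voice became louder or higher, not why.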

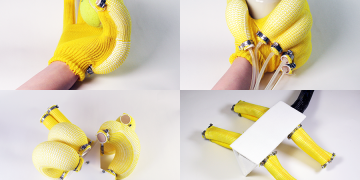

3. Biometric Monitoring and Physiological Data

Another critical aspect of affective computing is the collection and interpretation of biometric data. This includes measuring physiological signals such as heart rate, skin conductance, body temperature, and even brainwave activity.

- Wearable Sensors: Wearable devices like smartwatches and fitness trackers can monitor physiological changes that may indicate emotional shifts, such as a rising heart rate under stress or a slower one during calm states.

- Galvanic Skin Response (GSR): GSR, also known as electrodermal activity, measures changes in the electrical conductance of the skin, which varies with sweating. It is a sensitive indicator of emotional arousal and is often used to assess a person’s emotional response to stimuli.
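
One simple way to use such signals is to compare new readings against a person's own resting baseline. The sketch below flags heart-rate readings that sit well above that baseline. The `arousal_flags` function, the sample values, and the z-score threshold of 2.0 are assumptions chosen for illustration. Note that this signals arousal only: a high reading could reflect stress, excitement, or exercise.

```python
from statistics import mean, stdev


def arousal_flags(baseline, readings, z_threshold=2.0):
    """Flag readings that are unusually high relative to a resting baseline.

    Each reading is converted to a z-score using the baseline's mean and
    sample standard deviation, then compared with z_threshold.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot compute z-scores")
    return [(x - mu) / sigma > z_threshold for x in readings]


# Hypothetical resting heart rate samples (beats per minute).
resting = [62, 64, 63, 65, 61, 63]
print(arousal_flags(resting, [64, 70, 63]))
```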

4. Machine Learning and Data Analysis

To make sense of all the data collected, machine learning algorithms are employed to analyze and predict human emotions. These systems learn from large amounts of emotional data, detecting patterns that they then use to make predictions about new inputs.

- Supervised Learning: In supervised learning, the algorithm is trained on labeled datasets that include emotional annotations. For example, the algorithm might be trained on speech samples labeled as happy, sad, angry, etc. The machine learns to identify emotional patterns in the data.

- Unsupervised Learning: In unsupervised learning, the system finds patterns in data without predefined labels. This is particularly useful for identifying new or subtle emotional states that may not be immediately apparent.
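
The difference between the two approaches can be shown with a toy example. In the sketch below, the supervised part is a nearest-centroid classifier trained on labeled feature vectors, and the unsupervised part is a basic k-means loop that groups the same points without labels. The two-dimensional features (imagined as normalized pitch and loudness), the labels, and the starting centers are all made up for illustration.

```python
import math


def centroid(points):
    """Mean position of a list of equal-length feature tuples."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))


def train_centroids(labeled):
    """Supervised: compute one centroid per label from (features, label) pairs."""
    groups = {}
    for features, label in labeled:
        groups.setdefault(label, []).append(features)
    return {label: centroid(pts) for label, pts in groups.items()}


def predict(centroids, features):
    """Assign the label whose centroid is closest to the features."""
    return min(centroids, key=lambda label: math.dist(centroids[label], features))


def kmeans(points, initial_centers, iterations=10):
    """Unsupervised: repeatedly assign points to the nearest center, then re-average."""
    centers = list(initial_centers)
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda i: math.dist(centers[i], p))
            clusters[nearest].append(p)
        # Keep the old center if a cluster ends up empty.
        centers = [centroid(c) if c else centers[i] for i, c in enumerate(clusters)]
    return centers


labeled = [
    ((0.2, 0.3), "calm"), ((0.3, 0.2), "calm"),
    ((0.8, 0.9), "angry"), ((0.9, 0.8), "angry"),
]
model = train_centroids(labeled)
print(predict(model, (0.7, 0.8)))

points = [features for features, _ in labeled]
print(kmeans(points, initial_centers=[points[0], points[2]]))
```

The supervised model can name the emotion because it saw labels during training. The clustering step only discovers that two groups exist, and a person still has to interpret what each group means.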

Applications of Affective Computing in Human-Machine Emotional Interaction

The ability of machines to recognize and respond to emotions opens up new possibilities for more intuitive and natural human-machine interactions. Below are some of the key areas where affective computing is having a significant impact.

1. Customer Service and Support

In the customer service industry, chatbots and virtual assistants powered by affective computing are transforming how businesses interact with customers.

- Emotionally Aware Chatbots: By integrating emotion recognition, chatbots can gauge the mood of a customer from their text or voice input. For example, a frustrated customer could be identified by their choice of words and tone, prompting the chatbot to respond in a more empathetic and helpful manner.

- Improved Customer Experience: Emotionally intelligent systems can adjust their responses based on a customer’s emotional state, improving customer satisfaction and loyalty. For instance, an emotionally aware assistant can recognize signs of frustration in a customer and escalate the issue to a human representative more quickly.
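
The escalation behavior described above can be sketched with a very simple text-based scorer. Everything here is an assumption for illustration: the word list, the bonus weights for shouting and repeated exclamation marks, the threshold, and the `route` function. A production system would use a trained sentiment or emotion model rather than a hand-picked lexicon.

```python
import re

# Hypothetical lexicon of words that suggest frustration.
FRUSTRATION_TERMS = {
    "useless", "ridiculous", "unacceptable", "frustrated", "annoyed", "terrible",
}


def frustration_score(message):
    """Score a message from 0.0 to 1.0 using word choice and simple tone cues."""
    words = re.findall(r"[a-z']+", message.lower())
    if not words:
        return 0.0
    score = sum(1 for w in words if w in FRUSTRATION_TERMS) / len(words)
    letters = [c for c in message if c.isalpha()]
    if letters and sum(c.isupper() for c in letters) / len(letters) > 0.7:
        score += 0.2  # mostly upper case, read as shouting
    if message.count("!") >= 2:
        score += 0.1  # repeated exclamation marks
    return min(score, 1.0)


def route(messages, threshold=0.25, window=2):
    """Escalate when the average score of the most recent messages is high."""
    recent = messages[-window:]
    if not recent:
        return "continue_with_bot"
    average = sum(frustration_score(m) for m in recent) / len(recent)
    return "escalate_to_human" if average >= threshold else "continue_with_bot"


print(route(["Where is my order?"]))
print(route(["This is useless!!", "STILL NOT WORKING, UNACCEPTABLE"]))
```

Averaging over a short window of recent messages, rather than reacting to a single one, reduces the chance of escalating because of one sharply worded sentence.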

2. Healthcare and Therapy

In healthcare, affective computing holds immense promise for improving patient care and supporting mental health. Robots and AI systems that can recognize emotions could offer more personalized and compassionate care, particularly for vulnerable populations.

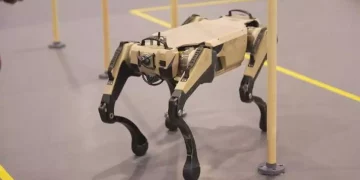

- Robotic Caregivers: Social robots equipped with affective computing capabilities are already being used in eldercare, where they provide companionship and assist with daily activities. These robots can detect changes in the emotional state of elderly patients and respond accordingly, offering comfort or notifying caregivers when necessary.

- Mental Health Support: AI-driven therapeutic tools, such as virtual therapists, can analyze speech patterns and emotional cues to help screen for mental health conditions and support their treatment. By recognizing signs of depression, anxiety, or stress, these systems can provide tailored interventions, such as guided meditation or cognitive behavioral therapy (CBT) exercises.

3. Education and Learning

Affective computing also plays an important role in education, particularly in providing emotional support for students and enhancing learning environments.

- Emotionally Intelligent Tutors: AI-powered tutors that can recognize and respond to student emotions could significantly enhance personalized learning. For example, if a student is frustrated or bored, the system could adjust the level of difficulty or offer encouraging feedback.

- Adaptive Learning Environments: Educational robots and AI systems can adjust their teaching strategies based on the emotional and cognitive responses of students. By understanding a student’s emotional state, these systems can optimize their teaching methods for better engagement and learning outcomes.
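
A tutor's adaptation rule can be as simple as combining the detected emotion with recent performance. The sketch below is one possible rule set, not an established method: the `next_difficulty` function, the emotion labels, the accuracy cutoffs, and the 1 to 5 difficulty scale are all assumptions for the example.

```python
def next_difficulty(current, emotion, recent_accuracy, lowest=1, highest=5):
    """Choose the next difficulty level from emotion and recent accuracy.

    Lower the level when the student is frustrated and struggling, raise it
    when the student is bored and doing well, otherwise leave it unchanged.
    """
    if emotion == "frustrated" and recent_accuracy < 0.5:
        step = -1
    elif emotion == "bored" and recent_accuracy > 0.8:
        step = 1
    else:
        step = 0
    return max(lowest, min(highest, current + step))


print(next_difficulty(3, "frustrated", 0.3))
print(next_difficulty(3, "bored", 0.9))
```

Requiring both signals to agree means that a frustrated student who is still answering correctly keeps the same level and can instead be offered encouragement.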

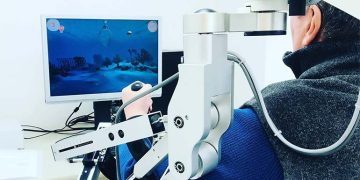

4. Entertainment and Gaming

The gaming industry has also begun to explore affective computing for creating more immersive and emotionally engaging experiences.

- Emotionally Aware Video Games: Future video games may incorporate affective computing to respond dynamically to players’ emotions. For instance, a game could adjust its difficulty level based on the player’s frustration or offer in-game support when it detects that the player is feeling stressed or anxious.

- Interactive Storytelling: Affective computing can be used to create interactive narratives in which characters in movies or video games respond to the emotional reactions of the viewer or player. This could lead to more personalized and engaging entertainment experiences.
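
Because emotion estimates during play are noisy, a game would probably not want to react to every momentary spike. The sketch below shows one way to handle this, using exponential smoothing plus two thresholds (hysteresis) so that the game eases off only after sustained frustration and returns to normal only once the player has clearly calmed down. The `DifficultyController` class and all of its parameter values are hypothetical.

```python
class DifficultyController:
    """Decide when to ease a game's difficulty from a frustration signal in [0, 1]."""

    def __init__(self, alpha=0.5, ease_above=0.7, restore_below=0.4):
        self.alpha = alpha                  # weight given to the newest reading
        self.ease_above = ease_above        # start easing above this level
        self.restore_below = restore_below  # stop easing below this level
        self.smoothed = 0.0
        self.eased = False

    def update(self, frustration):
        """Feed one reading; return True while the game should stay eased."""
        self.smoothed = (
            self.alpha * frustration + (1 - self.alpha) * self.smoothed
        )
        if not self.eased and self.smoothed > self.ease_above:
            self.eased = True
        elif self.eased and self.smoothed < self.restore_below:
            self.eased = False
        return self.eased


controller = DifficultyController()
for reading in [1.0, 1.0, 1.0, 0.5, 0.0]:
    print(reading, controller.update(reading))
```

The gap between the two thresholds keeps the difficulty from flickering back and forth when the smoothed signal hovers near a single cutoff.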

Ethical Considerations and Challenges

While the development of emotionally intelligent machines offers many benefits, it also raises important ethical and societal concerns.

1. Privacy and Data Security

The collection of emotional data, particularly through biometric monitoring and facial expression analysis, raises serious privacy concerns. Emotional information is highly sensitive, and it could be misused by those who collect it or exposed through unauthorized access.

- Informed Consent: It is critical that users are informed about the data being collected and how it will be used. Transparent privacy policies and robust data protection measures must be implemented to safeguard users’ emotional data.

2. Emotional Manipulation

Emotionally intelligent systems could also be used manipulatively, especially in marketing and advertising. Machines that can read and influence human emotions could be used to push products or services in a way that takes advantage of a person’s emotional vulnerabilities.

- Ethical AI Design: To prevent manipulation, ethical guidelines must be established for the design of emotionally intelligent machines. These guidelines should ensure that emotional data is used responsibly and that users’ emotional well-being is prioritized.

3. Dependence on Machines

As machines become more emotionally intelligent, there is a risk that people may become overly reliant on these systems for emotional support. This could lead to a decline in real-world social interactions and undermine the development of emotional intelligence in humans.

- Balanced Integration: While emotionally aware machines can provide valuable support, it is essential that they are integrated in a way that complements human interaction rather than replacing it.

Conclusion

Affective computing is poised to transform the way humans interact with machines, creating more intuitive, empathetic, and personalized experiences across various industries. By enabling machines to understand and respond to emotions, we can improve everything from customer service to mental health care, education, and entertainment.

However, the rise of emotionally intelligent machines also presents significant ethical challenges, from privacy concerns to the potential for emotional manipulation. As we move forward, it will be essential to strike a balance between leveraging the benefits of affective computing and ensuring that these technologies are used responsibly, ethically, and transparently.

The future of human-machine emotional interaction is one of collaboration, where AI and robots can enrich our lives, support our well-being, and help us navigate the complexities of the human experience.