Introduction

The advent of 3D vision reconstruction and depth estimation has revolutionized the fields of computer vision, robotics, and augmented reality (AR). By reconstructing three-dimensional (3D) scenes from 2D images and estimating the depth or distance between objects and the camera, these technologies enable machines to perceive the world in a way that closely mimics human visual perception. This ability is fundamental for applications such as autonomous vehicles, robotic navigation, augmented reality (AR), virtual reality (VR), and 3D modeling.

This article explores the fundamentals of 3D vision reconstruction and depth estimation, discussing the key techniques, algorithms, and tools that are used to enable machines to generate 3D representations of environments. We will delve into the challenges faced in this field, including dealing with noise, occlusion, and computational complexity, while also highlighting the latest advancements and real-world applications of 3D vision systems.

The Importance of 3D Vision Reconstruction and Depth Estimation

What is 3D Vision Reconstruction?

3D vision reconstruction refers to the process of creating a three-dimensional representation of a scene or object based on two-dimensional images or video feeds. It is an essential component in computer vision, particularly when depth perception and understanding of spatial relationships are required. In contrast to traditional 2D images, which only represent surfaces, 3D reconstructions provide more comprehensive and accurate models of real-world environments, which can be manipulated or analyzed for a variety of purposes.

The primary goal of 3D vision reconstruction is to generate an accurate, detailed, and geometrically correct model of a scene, often represented as a point cloud, mesh, or voxel grid.

What is Depth Estimation?

Depth estimation is the process of determining the distance between objects in a scene and the camera or observer. It is a fundamental task in computer vision, as it allows for the creation of 3D models and the understanding of how far objects are from the viewer. Depth estimation provides information about the 3D structure of a scene from 2D images or video streams, making it possible for machines to navigate, understand spatial relationships, and interact with the environment.

In simple terms, depth estimation answers the question: “How far is each object in the scene from the camera?”

Applications of 3D Vision Reconstruction and Depth Estimation

The applications of 3D vision reconstruction and depth estimation are vast and cover many fields:

- Autonomous Vehicles: Self-driving cars use depth estimation to understand the distance to nearby objects, such as other vehicles, pedestrians, and obstacles, ensuring safe navigation.

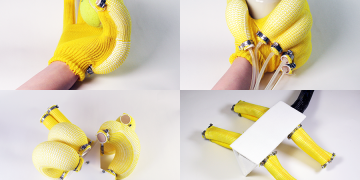

- Robotics and Manipulation: Robots use 3D vision to understand their surroundings, allowing them to interact with objects or perform tasks such as picking and placing items.

- Augmented Reality (AR) and Virtual Reality (VR): Depth estimation is used to overlay virtual objects in the real world or to create immersive virtual environments that respond to the user’s movements.

- 3D Scanning and Modeling: 3D vision is used in creating digital models of real-world objects for design, simulation, and entertainment, such as in gaming, architecture, and heritage preservation.

- Medical Imaging: Depth sensing and 3D vision are utilized in medical imaging systems for tasks such as diagnostics, surgical planning, and minimally invasive surgery.

Key Techniques in 3D Vision Reconstruction and Depth Estimation

Several techniques are employed in the process of depth estimation and 3D vision reconstruction. The most common ones include stereo vision, structure from motion (SfM), multi-view stereo (MVS), depth from defocus, and time-of-flight (ToF).

1. Stereo Vision

Stereo vision uses two or more cameras placed at different angles to capture two or more images of the same scene. By comparing the two images and finding corresponding points, stereo vision systems can compute the depth by triangulating the differences between the two views.

- Fundamentals of Stereo Vision: The main idea is to capture two images from slightly different perspectives and find the disparities between corresponding points. The disparity, or difference in position, allows for the calculation of depth based on geometric principles.

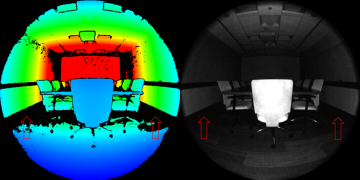

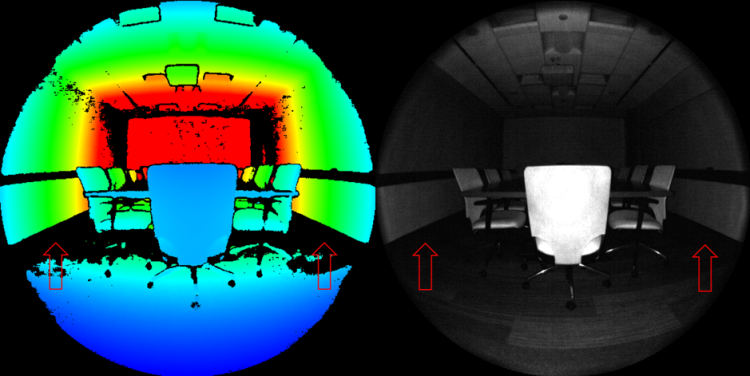

- Disparity Map: The result of stereo vision processing is often a disparity map, where each pixel contains information about the depth or distance to the corresponding object in the scene. A depth map can be generated from the disparity map by applying the camera calibration parameters.

- Challenges: Stereo vision relies on finding accurate correspondences between pixels in different images, which can be challenging in the presence of occlusions, repetitive textures, or low-contrast regions.

2. Structure from Motion (SfM)

Structure from Motion (SfM) is a technique that reconstructs 3D scenes from a series of 2D images taken from different viewpoints. SfM involves both camera motion estimation and 3D point cloud reconstruction.

- How SfM Works: The technique involves identifying feature points in the images, matching them across different views, and then estimating the camera’s position and the 3D location of the points based on the observed motion. The result is a sparse 3D reconstruction, which can be further refined by adding more images or using multi-view techniques.

- Applications: SfM is widely used in photogrammetry, 3D scanning, and geospatial modeling. It is especially useful when camera movement is available but stereo images are not.

- Challenges: SfM is computationally intensive and requires high-quality image feature matching. It also struggles with occlusions and non-rigid objects, where the scene is changing over time.

3. Multi-View Stereo (MVS)

Multi-view stereo (MVS) extends stereo vision by using more than two images to capture a scene from different angles. This technique improves the quality and detail of the 3D reconstruction by increasing the number of observations.

- How MVS Works: In MVS, the additional views help to reduce ambiguity in depth estimation and fill in missing information that may be occluded in stereo vision. The more views there are, the more accurate the 3D reconstruction becomes.

- Applications: MVS is used in 3D scanning, digital heritage preservation, and photorealistic rendering. It is particularly effective for generating detailed reconstructions of complex scenes or objects.

- Challenges: MVS requires accurate camera calibration and is computationally expensive. It also requires significant processing power to match features across many images.

4. Depth from Defocus

Depth from defocus (DFD) is a technique used to estimate depth by analyzing the blur (defocus) in the images taken at different focal lengths. The amount of defocus is related to the distance from the camera.

- How DFD Works: By capturing images with different focus settings, depth information can be inferred based on the amount of blur. This method is particularly useful in situations where stereo cameras or motion are unavailable.

- Applications: DFD is used in cameras with adjustable focal lengths, such as smartphones or cameras with variable lenses. It has applications in areas such as object tracking and camera autofocus systems.

- Challenges: DFD requires precise control over the focus settings, and it may not be suitable for scenes with significant motion or low contrast.

5. Time-of-Flight (ToF) Cameras

Time-of-flight (ToF) cameras use the time it takes for light to travel to an object and back to the sensor to measure depth. This technology provides direct depth measurements, making it highly accurate for real-time applications.

- How ToF Works: A ToF camera emits light pulses, usually in the infrared spectrum, and measures the time it takes for the light to return after hitting an object. The time delay is used to calculate the distance to the object.

- Applications: ToF cameras are commonly used in applications such as 3D scanning, gesture recognition, autonomous vehicles, and robotic navigation.

- Challenges: ToF cameras can be sensitive to lighting conditions, and they may not perform well in high-ambient light environments. Additionally, they have limited range and resolution compared to other techniques like stereo vision.

Challenges in 3D Vision Reconstruction and Depth Estimation

While the techniques discussed above have made significant advances, there are still several challenges to overcome:

1. Occlusion Handling

Occlusion occurs when one object blocks the view of another. This can lead to missing data in depth estimation, especially in stereo vision and multi-view stereo, where objects are partially obscured in one or more views.

- Solutions: Techniques such as multi-view reconstruction or depth completion using machine learning can help mitigate the effects of occlusion by inferring missing depth information.

2. Computational Complexity

3D reconstruction and depth estimation are computationally expensive, especially when processing high-resolution images or large datasets. Real-time processing is essential for many applications like robotics and AR, but achieving this requires efficient algorithms and hardware optimization.

- Solutions: GPU acceleration, parallel processing, and machine learning-based approaches are being employed to speed up computation and reduce resource consumption.

3. Noise and Calibration Errors

Noise in sensor data, along with errors in camera calibration, can degrade the accuracy of 3D reconstruction and depth estimation. Calibration errors, such as misalignment between the camera and sensor, can introduce systematic errors in the depth map.

- Solutions: Robust algorithms, sensor fusion techniques, and careful calibration procedures are required to improve accuracy and reduce noise.

Applications in the Real World

The ability to reconstruct 3D environments and estimate depth has a wide range of real-world applications:

1. Autonomous Vehicles

Autonomous vehicles use 3D vision systems to understand their surroundings and make decisions about navigation. By estimating the depth of objects around the vehicle, these systems enable safe driving by detecting obstacles, pedestrians, and other vehicles in real-time.

2. Robotics

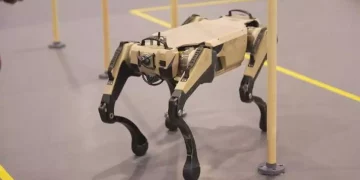

Robots use depth estimation for tasks such as navigation, object manipulation, and path planning. 3D vision enables robots to understand and interact with their environment, making them more autonomous and efficient.

3. Medical Imaging

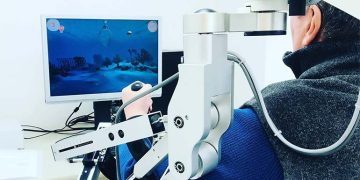

Depth estimation and 3D reconstruction techniques are widely used in medical imaging, including applications such as 3D ultrasound, MRI scanning, and surgical planning. These techniques help doctors visualize internal organs and tissues in three dimensions, improving diagnosis and treatment planning.

Conclusion

3D vision reconstruction and depth estimation are critical technologies in modern computer vision, enabling machines to understand the world in three dimensions. These technologies have broad applications across industries such as autonomous driving, robotics, healthcare, and entertainment. Despite significant progress, challenges such as occlusion handling, computational complexity, and noise reduction still need to be addressed.

Advancements in algorithms, sensors, and machine learning techniques are likely to further improve the accuracy, speed, and scalability of 3D vision systems, opening up new possibilities for immersive experiences, intelligent machines, and smarter systems across the globe. As these technologies continue to evolve, the ability to perceive and interact with the world in 3D will become an integral part of our daily lives and the future of automation.