Introduction

Sensor fusion is a fundamental enabler of high-precision environmental perception in modern robotics. As robots are deployed in applications ranging from autonomous vehicles and industrial automation to healthcare and service robotics, reliable and accurate perception of their environment becomes crucial for effective operation. To achieve it, robots integrate multiple sensors that together provide richer, more accurate, and more robust data than any single sensor could alone.

The ability to combine data from various sensors, such as LIDAR, radar, cameras, and inertial measurement units (IMUs), enables robots to better understand their surroundings, make intelligent decisions, and execute complex tasks with high precision. This approach, known as sensor fusion, is at the heart of autonomous navigation, object detection, motion tracking, and many other capabilities that modern robots possess.

In this article, we explore the principles behind sensor fusion technology, its applications in robotics, the challenges involved in implementing it, and the future directions of research and development. By understanding how sensor fusion can improve environmental perception, we gain insight into the evolution of intelligent robotic systems and their increasing role in industries that require high levels of autonomy and precision.

What is Sensor Fusion?

Defining Sensor Fusion

Sensor fusion refers to the process of integrating data from multiple sensors to improve the accuracy, reliability, and completeness of environmental perception. This integration is achieved by combining complementary sensor modalities to compensate for the weaknesses of individual sensors, enhancing the overall performance of the robotic system.

Robots rely on a variety of sensors to gather information about the environment, including:

- Visual sensors (e.g., cameras) for capturing images and video data

- LIDAR (Light Detection and Ranging) for creating detailed 3D maps of the surroundings

- Radar for detecting objects and measuring their velocity, particularly in challenging weather conditions

- Ultrasonic sensors for proximity detection and obstacle avoidance

- IMUs (Inertial Measurement Units) for tracking motion and orientation

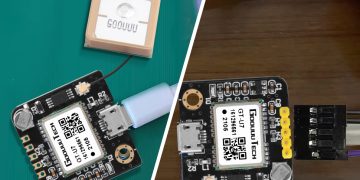

- GPS for geospatial location information in outdoor environments

Each sensor has unique strengths and limitations. For example, cameras provide rich visual data but are sensitive to lighting conditions, while LIDAR offers precise distance measurements but may struggle in heavy rain or fog. Radar, on the other hand, can work well in low-visibility conditions, but lacks the resolution of LIDAR.

By combining data from multiple sensors through fusion algorithms, robots can overcome these limitations and achieve more robust environmental perception, leading to enhanced autonomy, safety, and efficiency.

Types of Sensor Fusion

There are various techniques and approaches to sensor fusion, depending on the level of data integration and the specific requirements of the application. These can be broadly categorized into the following types:

- Low-Level Fusion (Data-Level Fusion): Raw or minimally processed measurements (e.g., pixel data from a camera or distance measurements from a LIDAR) are combined directly, before any features are extracted. This approach merges data from different sensors into a more complete representation of the environment, but it requires the sensors to be tightly synchronized and calibrated.

- Mid-Level Fusion (Feature-Level Fusion): Each sensor's data is first processed to extract features (e.g., edges, textures, or key points), and the features are then combined. Feature-level fusion is often used when sensors provide complementary data, such as in camera-LIDAR fusion for object detection in autonomous vehicles.

- High-Level Fusion (Decision-Level Fusion): Each sensor, or sensor pipeline, first produces its own result, such as a detected object or an estimated position. These results are then combined at the decision-making stage to inform tasks such as path planning or obstacle avoidance in autonomous robots.

- Kalman Filter-Based Fusion: The Kalman filter is an estimation algorithm rather than a fusion level, and it can be applied at any of the levels above. It is one of the most widely used techniques for sensor fusion, particularly for motion tracking and state estimation. It combines a motion model with noisy sensor data over time to estimate the most probable state of a system, such as the position and velocity of a robot. A good example is the fusion of IMU data with GPS data for vehicle localization.
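To make the Kalman filter idea concrete, here is a minimal sketch of a constant-velocity filter that estimates position and velocity from position-only measurements. The function name `kalman_cv_step`, the noise values, and the simplified process noise (the same `q` added to both diagonal covariance terms) are assumptions chosen for illustration, not a recommended configuration.

```python
def kalman_cv_step(state, cov, z, dt, q, r):
    """One predict/update cycle of a constant-velocity Kalman filter.

    state: (position, velocity)
    cov:   2x2 covariance as nested tuples ((a, b), (c, d))
    z:     position measurement
    q:     process noise added to each diagonal term (a simplification)
    r:     measurement noise variance
    """
    p, v = state
    (a, b), (c, d) = cov

    # Predict: x' = F x and P' = F P F^T + Q, with F = [[1, dt], [0, 1]].
    p_pred = p + dt * v
    v_pred = v
    a_pred = a + dt * (b + c) + dt * dt * d + q
    b_pred = b + dt * d
    c_pred = c + dt * d
    d_pred = d + q

    # Update with a position-only measurement, H = [1, 0].
    innovation = z - p_pred
    s = a_pred + r                 # innovation variance
    k_pos = a_pred / s             # Kalman gain for position
    k_vel = c_pred / s             # Kalman gain for velocity

    new_state = (p_pred + k_pos * innovation, v_pred + k_vel * innovation)
    new_cov = (
        ((1 - k_pos) * a_pred, (1 - k_pos) * b_pred),
        (c_pred - k_vel * a_pred, d_pred - k_vel * b_pred),
    )
    return new_state, new_cov


# Hypothetical target moving at 1 m/s, observed once per second without noise.
state, cov = (0.0, 0.0), ((10.0, 0.0), (0.0, 10.0))
for k in range(1, 21):
    state, cov = kalman_cv_step(state, cov, z=1.0 * k, dt=1.0, q=1e-3, r=0.25)
position, velocity = state
```

Although only position is measured, the filter recovers the velocity as well, because the motion model links the two. A real system would typically use a matrix library and a process noise model derived from the expected accelerations.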

How Sensor Fusion Enhances Robot Perception

1. Improved Accuracy and Reliability

Each sensor has its own set of limitations, such as noise, environmental interference, and sensor-specific errors. Sensor fusion mitigates these issues by leveraging the complementary strengths of each sensor. For example:

- LIDAR provides precise distance measurements but can struggle with reflective surfaces.

- Cameras provide detailed image data but can be affected by poor lighting conditions.

- Radar excels in low-visibility conditions but has relatively low resolution.

By fusing these data sources, the robot can accurately detect objects, map its environment, and track its motion without being overwhelmed by the weaknesses of any one sensor. This leads to better decision-making and more reliable operation.
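One simple way to see why fusion improves accuracy is inverse-variance weighting: when several sensors measure the same quantity with independent errors, each reading is weighted by how much it can be trusted. The sketch below assumes independent, unbiased measurements with known variances; the function name and the numbers are hypothetical.

```python
def fuse_measurements(measurements):
    """Fuse independent (value, variance) estimates of the same quantity.

    Each estimate is weighted by the inverse of its variance, so precise
    sensors dominate and noisy sensors still contribute a little.
    """
    if not measurements:
        raise ValueError("need at least one measurement")
    weights = [1.0 / variance for _, variance in measurements]
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, measurements)) / total
    fused_variance = 1.0 / total
    return fused_value, fused_variance


# Hypothetical range readings (metres, variance in m^2) to the same obstacle.
lidar = (10.02, 0.01)   # precise
radar = (10.30, 0.25)   # coarse
fused_value, fused_variance = fuse_measurements([lidar, radar])
```

The fused variance is always smaller than the smallest input variance, which is the formal counterpart of the claim that the combination is more reliable than any single sensor.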

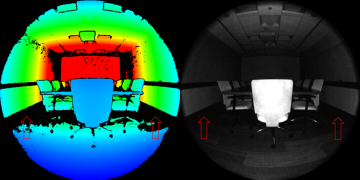

2. Enhanced Environmental Mapping

One of the most crucial applications of sensor fusion is in creating accurate, high-resolution maps of the robot’s environment. For autonomous navigation, robots often rely on Simultaneous Localization and Mapping (SLAM) techniques, which involve using sensor data to build and update a map of the surroundings while simultaneously tracking the robot’s position within that map.

By fusing data from LIDAR, cameras, and IMUs, a robot can construct highly detailed 3D maps of its environment, which are essential for tasks such as path planning, obstacle avoidance, and navigation in dynamic environments.
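As an illustration of how readings from several sensors can be merged into one map, the sketch below updates a single occupancy-grid cell using log-odds. Occupancy grids are only one common map representation, and the probabilities used here are hypothetical inverse sensor model outputs. The update assumes the readings are independent and that the cell starts at a prior of 0.5.

```python
import math


def log_odds(probability):
    """Convert a probability in (0, 1) to log-odds."""
    return math.log(probability / (1.0 - probability))


def to_probability(value):
    """Convert log-odds back to a probability."""
    return 1.0 - 1.0 / (1.0 + math.exp(value))


def update_cell(cell_log_odds, p_occupied_given_reading):
    """Fold one sensor reading into a cell; evidence adds in log-odds space."""
    return cell_log_odds + log_odds(p_occupied_given_reading)


# A cell with no prior knowledge (p = 0.5, log-odds = 0).
cell = 0.0
cell = update_cell(cell, 0.9)   # hypothetical LIDAR return: likely occupied
cell = update_cell(cell, 0.7)   # hypothetical depth-camera reading: probably occupied
occupancy = to_probability(cell)
```

Working in log-odds turns the Bayesian update into a simple addition, which keeps the per-cell cost low when the map is updated many times per second.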

3. Obstacle Detection and Avoidance

For robots operating in dynamic environments, the ability to detect obstacles and avoid collisions is essential. Sensor fusion enhances obstacle detection by combining data from various sensors:

- Radar can detect obstacles even in challenging weather, such as fog or heavy rain.

- Cameras can provide detailed recognition of objects, such as pedestrians, vehicles, or walls.

- Ultrasonic sensors can detect close-range obstacles and assist in precise movement control.

By fusing data from these sensors, robots can achieve real-time obstacle detection and collision avoidance, ensuring safe and effective movement.
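The following is a minimal decision-level sketch of this idea: each sensor reports its own range estimate, readings outside a sensor's valid range are discarded, and the closest remaining obstacle drives the stop decision. The `RangeReading` type, the range limits, and the stop distance are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RangeReading:
    sensor: str
    distance_m: Optional[float]  # None when the sensor detects nothing
    min_range_m: float           # closest distance the sensor can measure
    max_range_m: float           # farthest distance the sensor can measure


def nearest_obstacle(readings: List[RangeReading]) -> Optional[float]:
    """Return the closest distance reported within each sensor's valid range."""
    valid = [
        r.distance_m
        for r in readings
        if r.distance_m is not None and r.min_range_m <= r.distance_m <= r.max_range_m
    ]
    return min(valid) if valid else None


def should_stop(readings: List[RangeReading], stop_distance_m: float = 0.5) -> bool:
    """Conservative rule: stop if any trusted sensor sees something too close."""
    nearest = nearest_obstacle(readings)
    return nearest is not None and nearest <= stop_distance_m


readings = [
    RangeReading("radar", 4.2, 0.5, 100.0),
    RangeReading("camera", None, 0.3, 30.0),       # nothing recognized in this frame
    RangeReading("ultrasonic", 0.35, 0.02, 4.0),   # close-range obstacle
]
stop = should_stop(readings)
```

Here the ultrasonic sensor catches an obstacle that lies below the radar's minimum range, so the combined system stops even though the other two sensors would not have triggered on their own.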

4. Localization and Position Tracking

Accurate localization is fundamental to autonomous systems, particularly in autonomous vehicles, drones, and mobile robots. Robots use sensors like GPS, IMUs, and LIDAR to track their position within a specific environment. Sensor fusion helps combine these data sources to achieve higher precision and compensate for inaccuracies, such as GPS drift or IMU bias.

- GPS provides global position data but is degraded or unavailable indoors and in other GPS-denied environments.

- IMUs track changes in orientation and movement but suffer from drift over time.

- LIDAR provides precise distance measurements to objects, which helps refine localization.

By fusing these data sources, robots can achieve high-precision localization, even in challenging environments.
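A lightweight way to see how drift compensation works is a complementary filter, a simpler alternative to the Kalman filter. In the one-dimensional sketch below, the IMU-derived velocity is integrated for smooth short-term motion, and each GPS fix pulls the estimate back so the drift stays bounded. The function name, the blend factor `alpha`, and the biased velocity are hypothetical.

```python
def complementary_position(gps_positions, imu_velocities, dt, alpha=0.9, x0=0.0):
    """Blend dead reckoning from IMU velocity with absolute GPS positions.

    alpha close to 1 trusts the IMU prediction more; (1 - alpha) is the
    weight given to each GPS fix.
    """
    x = x0
    track = []
    for gps, velocity in zip(gps_positions, imu_velocities):
        predicted = x + velocity * dt                 # dead reckoning step
        x = alpha * predicted + (1.0 - alpha) * gps   # correct with GPS
        track.append(x)
    return track


# Hypothetical run: true speed 1.0 m/s, IMU-derived speed biased to 1.2 m/s.
dt = 0.1
steps = 100
gps = [1.0 * dt * k for k in range(1, steps + 1)]   # idealized, noise-free fixes
imu = [1.2] * steps
fused = complementary_position(gps, imu, dt)
dead_reckoning_only = sum(v * dt for v in imu)
```

In this run, integrating the biased velocity alone ends about 2 m away from the true position of 10 m, while the fused estimate stays within about 0.2 m. The residual offset depends on `alpha`: a lower value tracks GPS more closely but passes more GPS noise through.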

Applications of Sensor Fusion in Robotics

1. Autonomous Vehicles

Autonomous vehicles rely heavily on sensor fusion to navigate safely and effectively. By integrating LIDAR, radar, cameras, and IMUs, autonomous vehicles can perceive their environment in three dimensions, detect obstacles, and plan safe driving paths. Sensor fusion plays a critical role in:

- Real-Time Mapping: Creating detailed, up-to-date maps of the environment.

- Obstacle Detection: Identifying and avoiding pedestrians, other vehicles, and road hazards.

- Localization: Ensuring precise vehicle positioning, even where GPS is degraded or unavailable, such as in urban canyons.

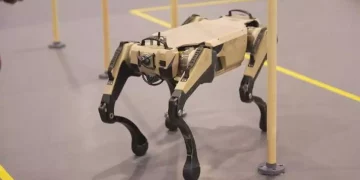

2. Robotic Manufacturing and Automation

In industrial robotics, sensor fusion is used to enhance precision and efficiency in manufacturing processes. Robots in manufacturing environments often rely on multiple sensors to ensure accuracy during assembly, quality control, and material handling. For example:

- Vision systems (cameras) are used to inspect parts for defects.

- Force sensors provide feedback on grip strength during handling.

- LIDAR helps with navigation and obstacle avoidance.

Sensor fusion allows these systems to work together seamlessly, improving productivity and quality control.

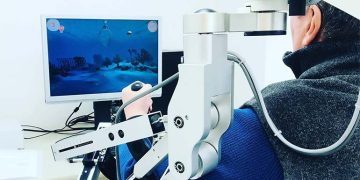

3. Healthcare Robotics

In the healthcare industry, sensor fusion enables robots to assist in surgery, rehabilitation, and patient care. For example:

- Surgical robots combine visual data from cameras with force feedback to perform delicate procedures with precision.

- Robotic exoskeletons use a combination of IMUs, pressure sensors, and position sensors to assist patients with mobility, providing real-time feedback to adjust movement patterns and prevent injury.

4. Drones and Aerial Robotics

Drones use sensor fusion to navigate complex environments, track moving targets, and ensure stable flight in various conditions. By combining data from IMUs, GPS, vision systems, and LIDAR, drones can perform tasks such as mapping, inspection, and delivery with high precision.

- SLAM (Simultaneous Localization and Mapping) enables drones to create maps of indoor environments and navigate without relying on GPS.

- Obstacle avoidance systems help drones avoid collisions with buildings, trees, and other obstacles.

Challenges in Sensor Fusion for Robotics

1. Data Synchronization and Calibration

One of the biggest challenges in sensor fusion is ensuring that data from different sensors is synchronized in time and calibrated in space. If measurements are not aligned to a common clock and a common coordinate frame, the fusion process produces inaccurate results, because readings that describe different moments or different locations are treated as if they described the same one.
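The two alignment steps can be sketched as follows: linear interpolation resamples a fast sensor at the timestamp of a slower one, and a rigid transform moves a point from a sensor's frame into the robot's frame. The function names, the 2D simplification, and the mounting values are assumptions for illustration; timestamps are assumed to be strictly increasing and expressed on a shared clock.

```python
import math
from bisect import bisect_left


def interpolate_at(timestamps, values, query_time):
    """Linearly interpolate a sampled signal at query_time.

    Queries outside the sampled interval are clamped to the nearest sample.
    """
    if not timestamps or len(timestamps) != len(values):
        raise ValueError("timestamps and values must be non-empty and equal length")
    if query_time <= timestamps[0]:
        return values[0]
    if query_time >= timestamps[-1]:
        return values[-1]
    i = bisect_left(timestamps, query_time)
    t0, t1 = timestamps[i - 1], timestamps[i]
    weight = (query_time - t0) / (t1 - t0)
    return values[i - 1] + weight * (values[i] - values[i - 1])


def sensor_to_robot_frame(point_xy, mount_yaw_rad, mount_offset_xy):
    """Rotate and translate a 2D point from the sensor frame to the robot frame."""
    x, y = point_xy
    c, s = math.cos(mount_yaw_rad), math.sin(mount_yaw_rad)
    return (c * x - s * y + mount_offset_xy[0],
            s * x + c * y + mount_offset_xy[1])


# Hypothetical IMU yaw-rate samples at 100 Hz, queried at a camera frame time.
imu_times = [0.00, 0.01, 0.02, 0.03]
imu_yaw_rates = [0.0, 0.1, 0.2, 0.3]
yaw_rate_at_frame = interpolate_at(imu_times, imu_yaw_rates, 0.015)

# Hypothetical LIDAR mounted 0.2 m ahead of the robot origin, rotated 90 degrees.
point_in_robot = sensor_to_robot_frame((1.0, 0.0), math.pi / 2, (0.2, 0.0))
```

The mounting yaw and offset play the role of the extrinsic calibration. In practice these values are estimated by a calibration procedure rather than measured by hand.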

2. Handling Sensor Noise and Uncertainty

Sensors are often subject to noise and uncertainty, especially in dynamic environments. For example, LIDAR may suffer from errors caused by reflective surfaces, while cameras may struggle with low-light conditions. Filtering techniques, such as the Kalman Filter, help to mitigate sensor noise and reduce uncertainty during fusion, but these methods must be carefully tuned to achieve optimal performance.
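To show what tuning means here, consider the scalar Kalman filter for a quantity assumed to stay roughly constant between measurements. The symbols are assumptions introduced for this sketch: $Q$ is the process noise variance, $R$ the measurement noise variance, $P$ the estimate variance, $z_k$ the measurement, and $\hat{x}_k$ the estimate at step $k$.

$$
\begin{aligned}
P_k^- &= P_{k-1} + Q \\
K_k &= \frac{P_k^-}{P_k^- + R} \\
\hat{x}_k &= \hat{x}_{k-1} + K_k \,(z_k - \hat{x}_{k-1}) \\
P_k &= (1 - K_k)\, P_k^-
\end{aligned}
$$

The gain $K_k$ always lies between 0 and 1. If $R$ is set too large relative to $Q$, the gain approaches 0 and the filter reacts slowly to real changes. If $R$ is set too small, the gain approaches 1 and the estimate follows the measurement noise. Tuning amounts to choosing $Q$ and $R$ so that they reflect the actual system and sensor behavior.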

3. Computational Complexity

Sensor fusion algorithms can be computationally intensive, particularly when processing large amounts of data from multiple sensors in real time. Efficient processing and data reduction techniques are needed so that fusion algorithms can run on robots with limited processing power without sacrificing performance.
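One widely used data reduction technique for point clouds is voxel-grid downsampling, sketched below: space is divided into cubes of a fixed size and all points in a cube are replaced by their centroid. The function name, the voxel size, and the sample points are hypothetical, and a production system would normally use an optimized library rather than pure Python.

```python
import math
from collections import defaultdict


def voxel_downsample(points, voxel_size):
    """Replace all points that fall in the same voxel with their centroid."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    buckets = defaultdict(list)
    for x, y, z in points:
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        buckets[key].append((x, y, z))
    # One centroid per occupied voxel, in order of first appearance.
    return [
        tuple(sum(axis) / len(members) for axis in zip(*members))
        for members in buckets.values()
    ]


# Hypothetical cloud: two nearby points and one far away, with 10 cm voxels.
cloud = [(0.01, 0.02, 0.0), (0.03, 0.04, 0.0), (1.0, 1.0, 1.0)]
reduced = voxel_downsample(cloud, voxel_size=0.1)
```

The voxel size sets the trade-off: larger voxels reduce the data volume and processing time further, at the cost of spatial detail.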

Future Directions in Sensor Fusion for Robotics

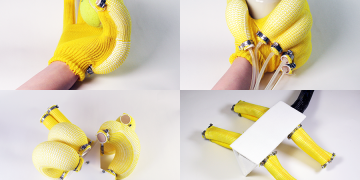

As sensor technologies and fusion algorithms continue to improve, the potential applications for sensor fusion in robotics will expand. Future developments may include:

- More advanced sensor fusion algorithms that use deep learning and AI to improve accuracy and decision-making.

- The integration of wearable sensors to enable human-robot collaboration and enhanced assistive robotics.

- The use of sensor networks to provide more comprehensive environmental data for autonomous systems.

Conclusion

Sensor fusion technology is a cornerstone of modern robotics, enabling high-precision environmental perception and enhancing the capabilities of robots across industries. By combining data from multiple sensors, robots can achieve a more accurate, reliable, and robust understanding of their surroundings, which is essential for tasks such as autonomous navigation, object detection, and motion tracking.

Despite the challenges associated with data synchronization, sensor noise, and computational complexity, the continued advancement of sensor fusion algorithms and sensor technologies promises to drive significant improvements in robotic autonomy. As sensor fusion becomes more sophisticated, we can expect robots to become even more intelligent, adaptive, and capable of performing tasks with greater precision and efficiency in a wide range of real-world applications.